This hidden Proxmox setting may sound cursed, but it’s really useful for coding and…

Having spent a long time with Proxmox, I’ve run into all sorts of obscure settings and performance tweaks – some acquired from random forum posts; others unearthed from issue threads on random Proxmox-centric GitHub packages. But the real gold mines were the seemingly cursed settings that, despite sounding unhinged, actually come with niche benefits, and in some cases, can be game-changers for your specific workloads.

Nested virtualization fits in the latter category, and while it’s fairly easy to set up, it’s not very popular in the PVE community. For the uninitiated, it’s the arcane art of running virtual machines inside other VMs, and while it has some massive deal-breakers, it completely changed how I worked on my coding projects.
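For readers who want to see what "fairly easy to set up" can look like, here is a minimal sketch rather than my exact steps. It assumes a Linux/KVM host such as Proxmox, where the `kvm_intel` or `kvm_amd` module exposes a `nested` parameter under `/sys/module`, and it assumes the guest should use the `host` CPU type so it can see the virtualization extensions. The helper names and the VM ID `100` are hypothetical.

```python
from pathlib import Path
from typing import List, Optional

# KVM kernel modules that expose a "nested" parameter (Intel and AMD hosts).
KVM_MODULES = ("kvm_intel", "kvm_amd")


def parse_nested_flag(text: str) -> bool:
    """Interpret the parameter file contents: 'Y' or '1' means enabled."""
    return text.strip() in ("Y", "y", "1")


def nested_enabled(sysfs_root: str = "/sys/module") -> Optional[bool]:
    """Return True/False for the first KVM module found, or None if no
    KVM module parameter file exists under sysfs_root."""
    for module in KVM_MODULES:
        param = Path(sysfs_root) / module / "parameters" / "nested"
        if param.is_file():
            return parse_nested_flag(param.read_text())
    return None


def qm_cpu_host_command(vmid: int) -> List[str]:
    """Build (but do not run) the Proxmox CLI call that sets the VM's
    CPU type to 'host'. The VM ID is supplied by the caller."""
    return ["qm", "set", str(vmid), "--cpu", "host"]


if __name__ == "__main__":
    state = nested_enabled()
    if state is None:
        print("No kvm_intel/kvm_amd module parameter found on this machine")
    else:
        print("Nested virtualization enabled on host:", state)
    # Hypothetical VM ID 100, shown for illustration only.
    print("Suggested command:", " ".join(qm_cpu_host_command(100)))
```

The script only reads a file and prints a command, so it is safe to run on the Proxmox host before changing anything. If the check reports that nesting is disabled, the module option has to be enabled on the host first, which this sketch does not do.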

If you’re a VM-hosting veteran, you’ve probably spotted the biggest issue with nested virtualization: it’s a resource hog. I won’t sugarcoat it, either. Given the overhead of context switching in a nested VM, it’s far from ideal for budget-friendly rigs. Running everything inside a cascading setup of virtual machines also adds an extra point of failure, namely the parent VM. Combine that with the fact that running VMs is already pretty taxing on your host rig, and you’ve got the perfect recipe for a sluggish experience on weak, RAM-deficient servers.

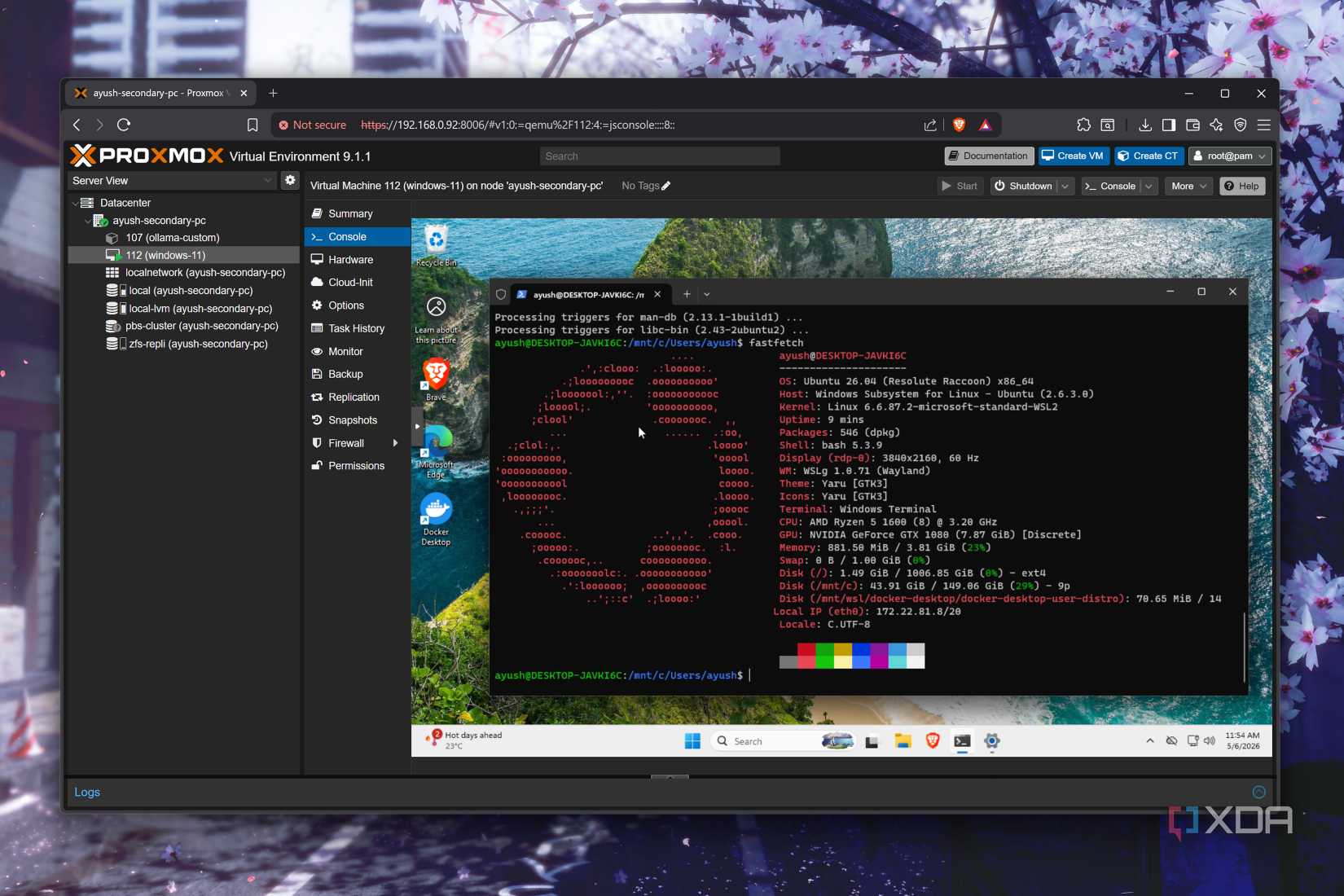

But with the right setup, you can use nested virtualization without too many adverse effects. Source? Yours truly, who runs dev VMs (powered by the king of unoptimized operating systems, Windows 11) on an outdated Ryzen 5 1600 and a Xeon E5-2650 v4. Not only are these machines ancient by today’s standards, but the latter’s single-core performance is terrible for any Linux distro, let alone the likes of Windows 11.

You see, rather than running full-on Linux VMs via VirtualBox in my Windows 11 dev (virtual) environment, I use it to run WSL2 and Docker containers, both of which require nested virtualization to be enabled. Sure, there is some context-switching overhead, but it’s not so draining that my old PCs can’t handle it. I use centralized virtual machines to host dev projects that I can access from every device in my arsenal without cluttering them with my unorganized file-creation habits.

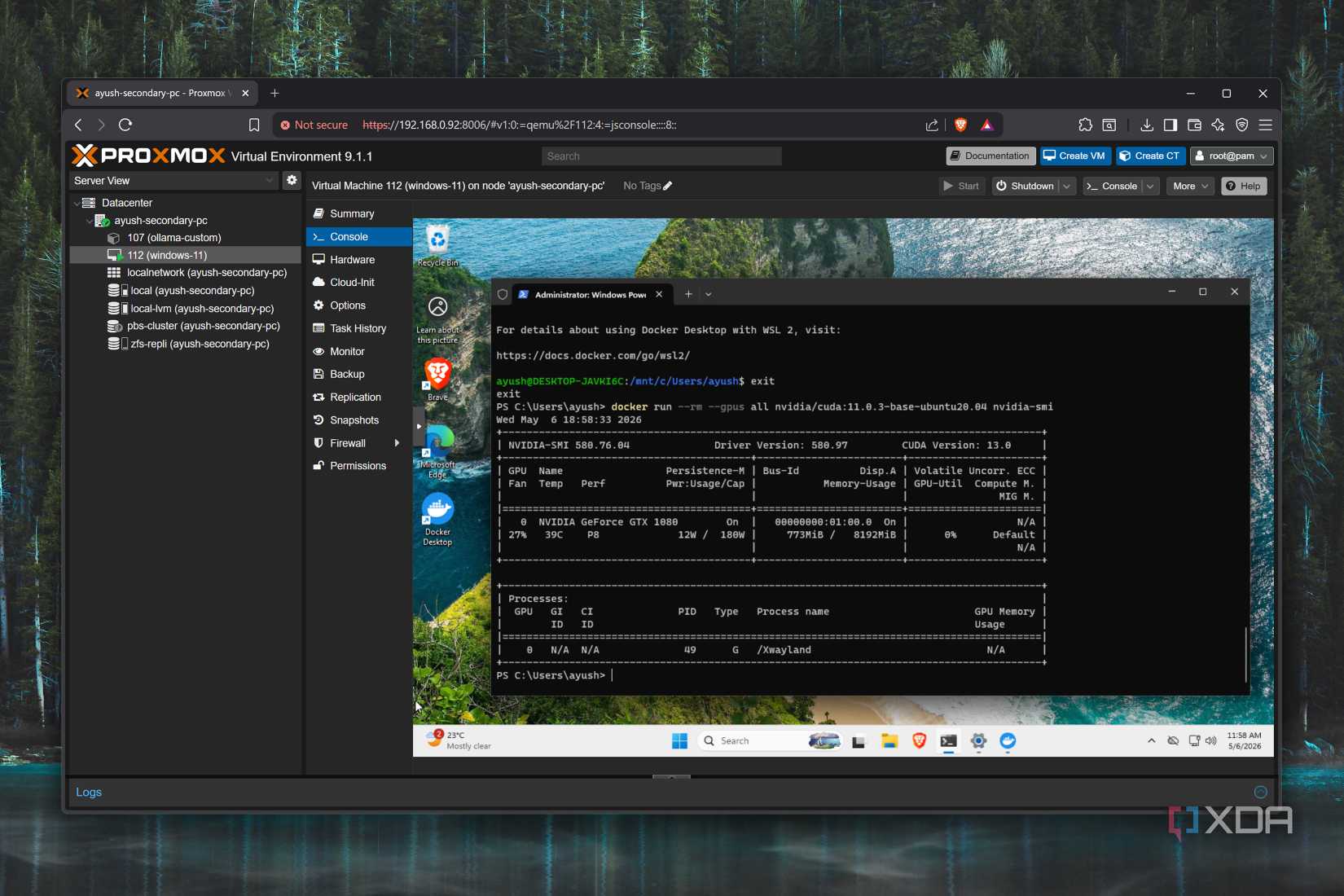

I primarily rely on nested virtualization to run Docker containers, and since they’re fairly lightweight, the Windows 11 virtual machine (and the Linux one created by WSL2 underneath) stays fairly responsive even with different IDEs and agents open in the background. Likewise, WSL2 is just as useful when I want to practice my PowerShell scripting and DevOps skills in a Windows environment. Is it weird? Definitely, but it complements my setup fairly well, especially once you throw GPU passthrough into the mix…

All it took was a little bit of tinkering and a whole lot of patience

Ever since I jumped down the LLM rabbit hole, I’ve been messing around with different models. Don’t get me wrong: I still use dedicated LXCs to host local models. But I occasionally need to work with AI-accelerated workloads on my dev VM. Since I set up GPU passthrough long ago (which is a lot easier than you think), I can just pass the graphics card to containers normally. Again, there is some overhead because the graphics card passes through two layers of virtualization, but that doesn’t impede my Docker experiments too much. I also have some lightweight apps that require GPU acceleration in WSL2, and since I’ve already got a working graphics card on the parent VM, I can just as easily run them inside the nested Linux environment.

Switching from a somewhat practical setup to something that requires a lot more computational power, I also use nested virtualization to tinker with other hypervisors and home lab tools on my Proxmox workstation. ESXi, in particular, gave me a lot of trouble when I tried configuring it as a bare-metal environment. Apart from one specific x86 SBC, ESXi refused to detect the built-in Ethernet adapters on every server rig in my arsenal, be it an aged gaming PC, shiny new NAS units, or my enterprise-tier (but ancient) Xeon system. The same went for the NICs I’ve bought over the years.

Thanks to nested virtualization, ESXi worked right off the bat when I deployed it as a virtual machine on Proxmox. With enough CPU cores and memory, I even managed to get a few (mostly CLI) distros running as VMs inside my ESXi instance. It won’t win any awards on the performance front, but considering that my only other options were to unplug the SBC I use as my router or spend hundreds of dollars on a NIC that might be compatible with ESXi, I’d rather take the performance trade-off.

The same holds true for other home server distros as well. I’ve got some spare PCs that I occasionally arm with Proxmox alternatives whenever I hear about their new releases and need to revisit them for my articles. But if it’s just random home lab shenanigans, nested virtualization is a decent way to experiment with them without going through the trouble of configuring a bare-metal setup.
