I compared ChatGPT Images 2.0 and Gemini Nano Banana, and one easily wins

Ask the average person what they use AI for, and they’ll probably rattle off the usual suspects: drafting emails, writing up quick LinkedIn posts, summarizing meeting notes, maybe debugging a line of code, and generating images. From creating images of you hugging your past self to turning yourself into a Pixar character to designing entire product mock-ups and marketing assets, AI image generation is essentially no longer a party trick.

Google’s been leading this space with its Gemini Nano Banana model for a good bit now, but since OpenAI dropped ChatGPT Images 2.0 on the 21st of April, Google has some serious competition. I’ve been using Nano Banana since it launched and have seen it grow into what it is today. I’ve been testing ChatGPT Images 2.0 since the day it launched and, of course, comparing it to Nano Banana at every turn. The results genuinely surprised me.

I know a lot of people who just open the respective tool, prompt it to generate whatever image they need, and never really think about what’s happening under the hood. So, I thought I’d begin with a quick breakdown of what each model actually is and what makes them different. Google announced its first Gemini-powered image model, Nano Banana, back in August 2025, built on the Gemini 2.5 Flash architecture. It went viral almost immediately. The quirky name stuck, people were cracking jokes about the banana logo, and it quickly became the go-to for AI image generation and editing.

Then in November, they released Nano Banana Pro, offering advanced intelligence and studio-quality creative control. And in February 2026, Google launched Nano Banana 2, which combines the advanced features of Nano Banana Pro with the speed of Gemini Flash models. Nano Banana 2 can pull from Gemini’s real-world knowledge base, powered by real-time information and images from web search to more accurately render specific subjects. It can generate accurate, legible text for marketing mock-ups or greeting cards, and even translate and localize text within an image. It supports up to true 4K resolution as part of the standard offering, and it’s a lot better at following instructions compared to the previous models. The model is currently the default image generation experience across Google’s products.

ChatGPT Images 2.0, meanwhile, can generate up to 10 images from a single prompt. The new model has a more “up-to-date understanding” and a knowledge cutoff of December 2025. Sam Altman described the model as “going from GPT-3 to GPT-5” all at once, which is a fairly bold claim to make. That said, ChatGPT’s initial image generation model is something that I (and a lot of other people) found pretty underwhelming. I’d basically never reach for it over Nano Banana. So, the fact that Images 2.0 has genuinely pulled me back says a lot about how big of a leap this is.

Every LLM has somewhat of its own personality. For instance, I find that Claude models are a lot more conversational and ChatGPT models feel more confident and structured. You can tell the difference even when you give them the same prompt. The same applies to their image models. Give ChatGPT Images 2.0 and Nano Banana 2 the exact same prompt, and you’ll get two noticeably different-looking images. This isn’t just because of the data each model is trained on or its underlying architecture. It’s because each model has a default aesthetic it just seems to gravitate toward.

In my testing, I’ve found that ChatGPT Images 2.0 ends up producing more grounded and naturalistic outputs. The outputs look like real photos that have been professionally edited. The lighting feels a bit imperfect in a good way, textures have variation, and the image just looks very polished in all the right ways. Nano Banana 2, on the other hand, leans harder into vibrant, saturated, eye-catching visuals. The colors are deeper, the contrast is punchier, and everything tends to feel more stylized. But the results don’t feel very realistic.

This clearly isn’t just my opinion either. For instance, Reddit user u/Inevitable_Gur_461 posted a GPT-Image 2 vs Nano Banana 2 comparison on the r/ChatGPT subreddit. He used a fairly in-depth prompt to generate a black-and-white vintage wedding photograph from the 1950s. He generated two images with ChatGPT Images 2.0, while the last image was from Nano Banana 2. I could’ve identified the Nano Banana 2 image without a second glance or needing to see the comments. It just felt very… Nano Banana-ey. It just has a certain AI look to it!

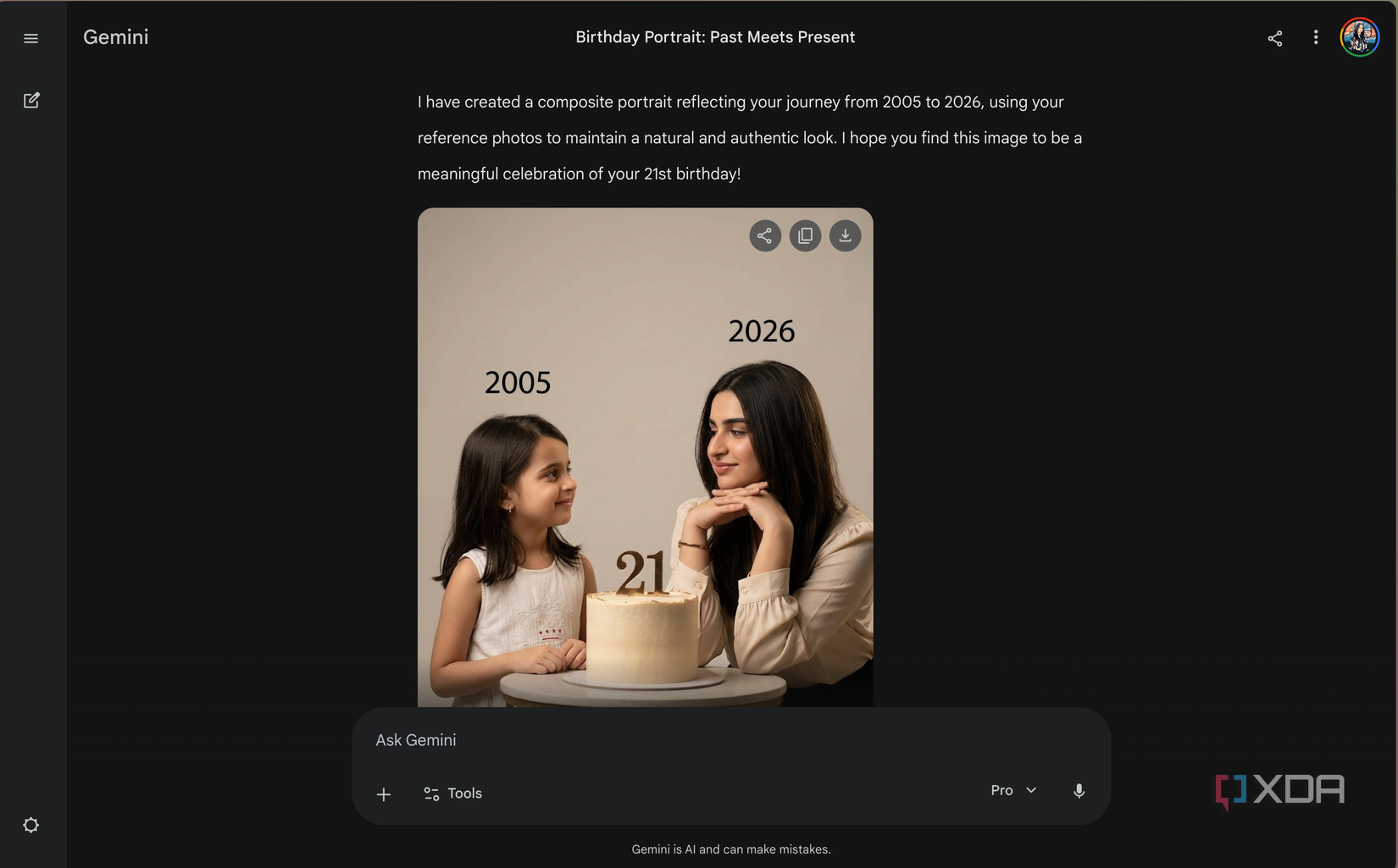

Here’s an example I ran myself. There was this Instagram trend going on where you’d give an image model photos of your younger and current self, and then ask it to generate an image of both versions of you sitting together. I gave both models the same prompt, the same reference photos, and asked for the same soft, cinematic, studio-style look.

While I admittedly wasn’t the biggest fan of ChatGPT’s result (which is more so because of the way my own images turned out), Nano Banana 2’s result just felt very blatantly overdone. It had that telltale over-smoothed skin, slightly too-perfect lighting, and a general “AI sheen” that made it obvious at first glance. It felt more akin to a professional photoshoot, which wasn’t the vibe I had asked for at all.

That said, I’m not saying one is better than the other. It comes down to personal preference and, more importantly, what you’re trying to create. If you need something that looks like it was pulled from a real camera roll, I’d recommend ChatGPT Images 2.0. If you want something that’s immediately eye-catching, say for a social media post, Nano Banana 2’s style is what you need.

While more natural-looking images are certainly something you’ll notice right away, they aren’t really what keeps me reaching for ChatGPT Images 2.0 over Nano Banana 2. The real advantage, in my eyes, is context. ChatGPT Images 2.0 is a lot better than Gemini at remembering exactly what you’re working on. For instance, I have this trademark hamster sticker I’ve been using on messaging apps (including Slack) that I send to everyone at any given moment. If I’m freaking out, I’ll send it. If I’m happy, I’ll send it. If I’m in tears, you know what I’m sending. I once decided, why not go ahead and convert the sticker to a Google Meet background?

From there on, I’ve been constantly generating variations of the sticker relevant to the situation I’m in. A hamster (or the hamsters) crying, angry over something, cramming for an exam, and even celebrating my birthday by blowing candles and wearing a cap. The hamster sticker is basically supposed to represent… me. I started this tradition off with Nano Banana 2 (before GPT Images 2.0 launched) and while the results were always impressive (they don’t need to be “realistic”), I’d have to attach the reference image again, re-describe the character, and practically start the conversation over every few messages. If I just gave it the instructions by describing what I wanted (even if I referred to the pic), it would either generate something completely off or just default to a generic hamster that looked nothing like my original sticker. The context just doesn’t stick. With ChatGPT Images 2.0 though, I just dropped the original reference image once, and I’ve simply been telling it what to do from there.

So, for instance, I asked the model to move all the hamsters to a school and show that they’re studying. I didn’t include the reference image, or any additional details. Just the prompt, and that’s it. I then asked it to make it look like all the hamsters were begging and saying “pls????” because I wanted to send it to my editor. At one point, someone called the hamsters mice, so naturally I had ChatGPT generate an entire angry protest scene where the hamsters were screaming “WE ARE NOT MICE!!!” through tears. The point is, I kept building on the same running joke without needing to repeatedly explain the characters, their vibe, or their appearance. ChatGPT Images 2.0 remembered the hamster universe surprisingly well. The hamsters still looked like my hamsters and were in the same scene in the bigger picture, even as the scenarios became progressively more unhinged.

Another example is the one I touched on above: the younger and older trend. I dropped a screenshot of the Instagram Reel I saw about this to give Nano Banana 2 some reference, and instructed it to make the output similar to it. Instead of using the screenshot as stylistic inspiration, the model just gave me the same image slightly edited.

Same outfits, same scenario, same people in the original image with slightly different faces. It completely changed how the older girl looked. Gemini gave her curls, which I find funny because I don’t have curls, meaning the model was clearly not attempting to replicate me!

As I just mentioned, I’ve found that Gemini’s Nano Banana 2 model isn’t the best at retaining context, so I constantly need to explain the same thing again and re-upload reference images. So, you can imagine what it’s like refining an image the model produced. There doesn’t seem to be a straightforward way to just say “change this one thing” and have it actually work.

More often than not, you’ll find that you need to download the image, upload it, and then request your changes. ChatGPT Images 2.0, on the other hand, makes this whole process feel effortless. You click on a generated image, and you get two options: you can either describe your edit directly in the conversation panel, or use a selection tool to highlight a specific part of the image and then describe what you want changed. The model holds onto everything else, and only touches what you asked it to. This might sound minor, but it makes a massive difference.

While I didn’t really expect I’d ever be saying this, ChatGPT’s latest image model definitely wins this round. It’s a great model, produces scarily impressive images, and takes time to think through the image and develop it (whereas Gemini always seems to be in a hurry).

That said, I/O 2026 is right around the corner, and a new model is expected. Google I/O kicks off on May 19th, and multiple outlets are speculating that Nano Banana could get a significant update alongside what’s expected to be a major Gemini model announcement. So while ChatGPT Images 2.0 has the edge right now, I wouldn’t count Google out just yet.