I used Claude Code to help me visualize my PC benchmarks, and it changed my workflow…

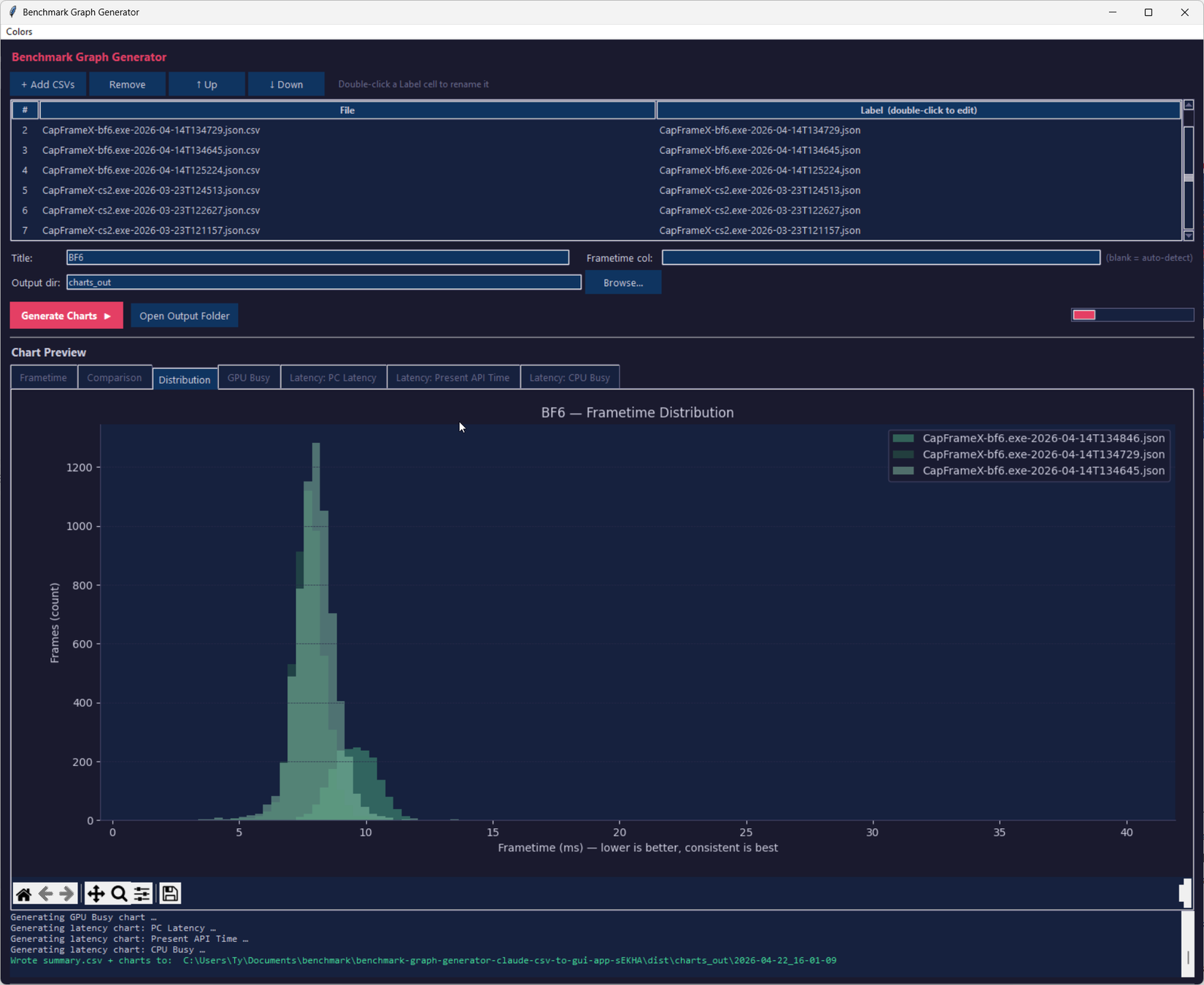

As someone who is equal parts gamer and tinkerer, I spend a lot of time looking at performance metrics. Average FPS is just the tip of the iceberg for me. I’m interested in seeing frametime, latency graphs, and other metrics like GPU Busy compared on a run-to-run basis. Visualizing these metrics for personal use is easy, since applications like CapFrameX do it for you. But I was looking for a way to produce slick graphs for publication here on XDA and elsewhere, so, like most people with a rudimentary knowledge of coding, I turned to Claude Code to help me build an application that could do just that. It succeeded far beyond my expectations, and there’s still so much I can improve on.

CapFrameX and PresentMon both do their jobs well, but their visualization outputs are designed for the person running the test to do a quick check of the data, not for a reader who’s a few pages into an article. Publication-grade charts need consistent styling, clean typography, color palettes that match across pieces so an article feels cohesive, and a format that looks intentional rather than screenshotted from a default view.

My requirements are simple: I want something that will take a CSV file from either of those applications and output nice-looking graphs, with appropriate scaling and colors, that I can edit at any time. I’m not a developer, but I know just enough Python to get me into trouble.
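
To make the input side of that requirement concrete, here is a minimal sketch of reading a frametime column from a capture CSV and deriving average FPS from it. It is not the tool's actual code. The column name `MsBetweenPresents` and the helper names `load_frametimes` and `summarize` are assumptions for illustration, and the sample data is made up.

```python
import csv
import io

# Assumption: the frametime column is named "MsBetweenPresents". Check the
# header of your own export, since names differ between tools and versions.
FRAMETIME_COLUMN = "MsBetweenPresents"


def load_frametimes(csv_text, column=FRAMETIME_COLUMN):
    """Return one column of a CSV (frametimes in milliseconds) as floats."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [float(row[column]) for row in reader]


def summarize(frametimes_ms):
    """Average FPS is the number of frames divided by the total run time."""
    total_seconds = sum(frametimes_ms) / 1000.0
    return {
        "frames": len(frametimes_ms),
        "avg_fps": len(frametimes_ms) / total_seconds,
        "worst_frametime_ms": max(frametimes_ms),
    }


# Made-up three-frame capture, only to show the call pattern.
SAMPLE_CSV = "MsBetweenPresents\n10.0\n10.0\n20.0\n"
stats = summarize(load_frametimes(SAMPLE_CSV))
print(stats)
```

Dividing frame count by total time, rather than averaging per-frame FPS values, keeps a few very fast frames from inflating the result.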

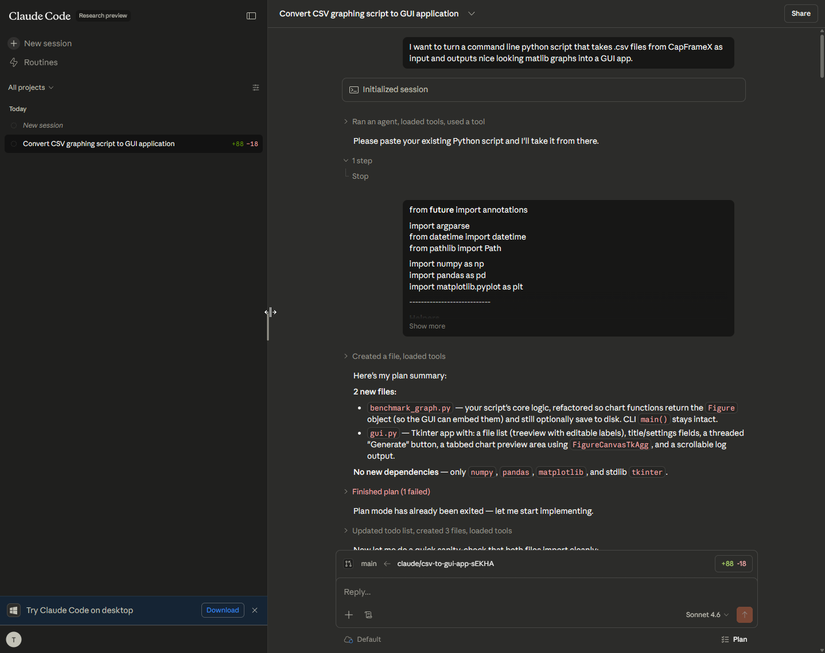

The original script came out of ChatGPT, in an attempt to see whether what I was asking was even reasonable in the first place. The script it produced was a bit shoddy and didn’t work exactly the way I wanted, but it did output graphs in PNG format. I put the project on the back burner for a couple of months, and when I later handed it off to Claude Code for a sanity check, it cleaned up the CLI arguments well enough to give me the confidence to ask it for a GUI wrapper.

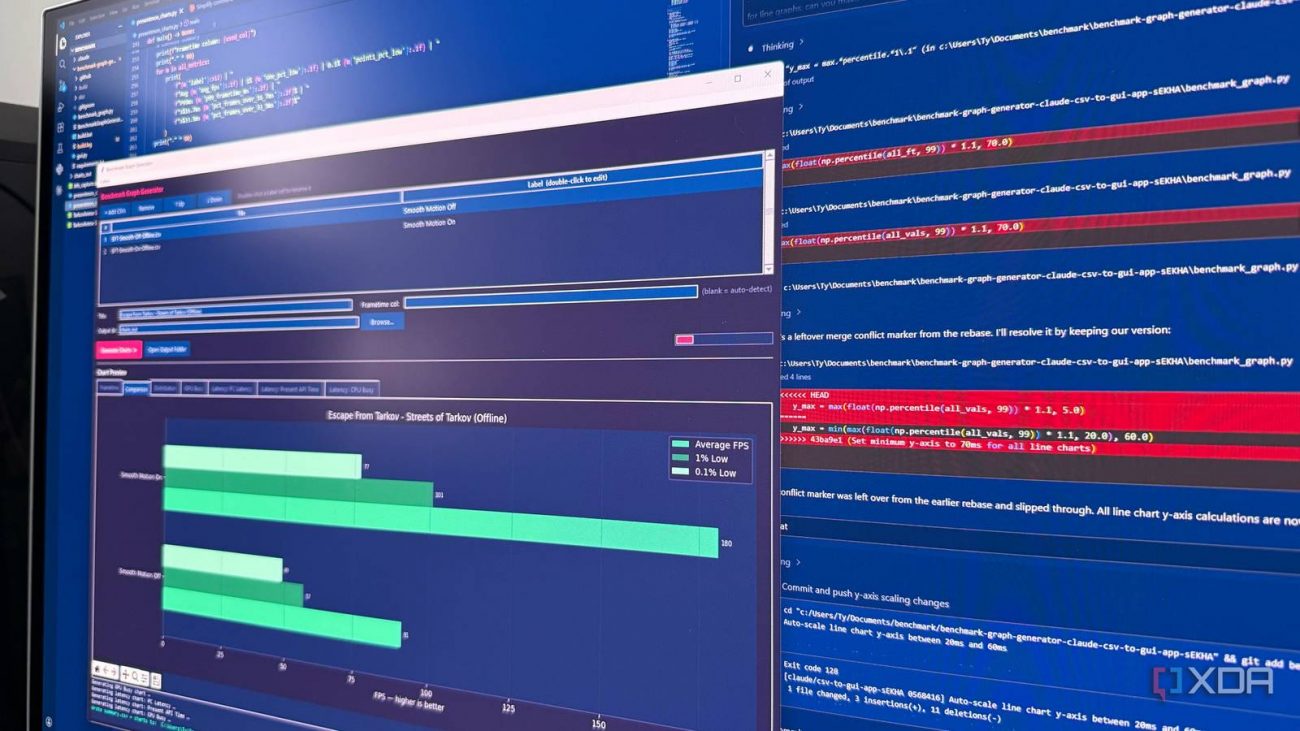

The architecture ended up split cleanly in two. The CLI tool, benchmark_graph.py, still works standalone, so if I prefer running charts from a terminal for some reason, nothing’s changed. The GUI, gui.py, is a wrapper that imports from it.
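
A split like this usually comes down to keeping the real work in plain functions that both the terminal entry point and the GUI can call. The sketch below shows that pattern under stated assumptions: `build_parser`, `generate_charts`, `main`, and the `--output` flag are hypothetical names, not taken from the actual `benchmark_graph.py`, and the chart rendering is replaced by a placeholder that only plans output paths.

```python
import argparse
from pathlib import Path


def build_parser():
    """Command-line interface for the standalone script (hypothetical flags)."""
    parser = argparse.ArgumentParser(description="Render charts from capture CSVs.")
    parser.add_argument("csv_files", nargs="+", help="one or more capture CSV files")
    parser.add_argument("--output", default="charts", help="folder for the PNG files")
    return parser


def generate_charts(csv_files, output="charts"):
    """Placeholder for the rendering step: returns the PNG paths it would write."""
    return [Path(output) / (Path(name).stem + ".png") for name in csv_files]


def main(argv=None):
    """CLI entry point. A GUI would skip this and call generate_charts directly."""
    args = build_parser().parse_args(argv)
    for png_path in generate_charts(args.csv_files, args.output):
        print(png_path)
    return 0


# The real script would end with the usual guard, so that importing it from
# gui.py does not trigger the command-line parsing:
#     if __name__ == "__main__":
#         raise SystemExit(main())

# Calling main with an explicit argument list mimics a terminal invocation.
exit_code = main(["run1.csv", "run2.csv", "--output", "out"])
```

With this layout, a wrapper module only needs something like `from benchmark_graph import generate_charts` and can pass it whatever the user selected in the window.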

I didn’t even specify exactly how I wanted the GUI to be set up. I simply asked for it, and Claude delivered it. There were some growing pains in getting the build process to work: I wanted to be able to click an .exe and have the GUI open instead of running it from the command line. That’s very picky of me, but I was also just curious to see whether Claude could do it in the first place. After sorting out some bugs in getting the script to execute correctly, I finally had a working GUI app that did exactly what I wanted.

It worked perfectly. I could add my CSV files and press “Generate Charts”, but more interestingly, it added a bunch of quality-of-life features that I didn’t ask for and that weren’t in the original script, at least not as CLI arguments I was aware of. It gave me the ability to label the CSVs without having to rename the files themselves, and it added a dedicated section for chart previews that populates right after running the script. Both are very useful things I wouldn’t have thought to ask for. I did ask it to add a way to change the color of the charts using a color picker, and it was successful, though I think more tweaks are needed before I can change the background color too. Output folders are also timestamped automatically and include a summary CSV alongside the chart files, which makes quick looks simple.
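
For readers curious how labels, timestamped folders, and a summary file can fit together, here is a minimal sketch using only the standard library. It is an illustration, not the tool's code: the folder name format, the `summary.csv` filename and its columns, and the helper names `make_output_dir` and `write_summary` are all assumptions.

```python
import csv
import tempfile
from datetime import datetime
from pathlib import Path


def make_output_dir(base_dir, now=None):
    """Create a folder named after the current time (assumed name format)."""
    now = now or datetime.now()
    out_dir = Path(base_dir) / now.strftime("%Y-%m-%d_%H-%M-%S")
    out_dir.mkdir(parents=True, exist_ok=True)
    return out_dir


def write_summary(out_dir, rows):
    """Write one row per capture next to the charts (assumed column set)."""
    summary_path = Path(out_dir) / "summary.csv"
    with summary_path.open("w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=["label", "source_file", "avg_fps"])
        writer.writeheader()
        writer.writerows(rows)
    return summary_path


# Display labels live in a mapping, so the files on disk keep their names.
labels = {"capture_01.csv": "Run A (stock)"}

with tempfile.TemporaryDirectory() as tmp:
    out_dir = make_output_dir(tmp, now=datetime(2025, 1, 2, 3, 4, 5))
    summary = write_summary(
        out_dir,
        [{"label": labels["capture_01.csv"], "source_file": "capture_01.csv", "avg_fps": 75.0}],
    )
    folder_name = out_dir.name
    summary_text = summary.read_text(encoding="utf-8")

print(folder_name)
print(summary_text)
```

Passing `now` explicitly is only there to make the example repeatable; in normal use the function falls back to the current time.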

I want to make this abundantly clear for those who choose to peruse the GitHub repo: I am not a software developer. I can read the code well enough to follow what it’s doing, and I can write basic-to-intermediate programs in a couple of different languages, but I’m by no means an expert. I can’t tell you whether it’s constructed sensibly, whether there are subtle bugs waiting for edge cases I haven’t hit, or whether a real engineer would look at it and wince. Nothing has been code-reviewed, and there have been no tests besides me running my CSV files through it.

And this is the truth about vibe-coded apps: code produced through conversational prompting tends to work for the exact scenarios you tested and break quietly outside them. If CapFrameX changes its CSV schema in a future update, or a matplotlib dependency deprecates something the tool relies on, I’m not going to have my nose buried in Visual Studio. I’m running back to the Claude Code chat window to figure out what broke.
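
One common guard against the quiet kind of breakage is to check the CSV header up front and fail with a readable message. The sketch below shows the idea; it is not something the tool is known to do. The column names in `REQUIRED_COLUMNS` are assumptions for illustration, and `SchemaError` and `check_schema` are hypothetical names.

```python
import csv
import io

# Assumption: these are the columns the charts depend on. Replace them with
# whatever your own export actually contains.
REQUIRED_COLUMNS = {"TimeInSeconds", "MsBetweenPresents"}


class SchemaError(ValueError):
    """Raised when a capture file lacks a column the charts need."""


def check_schema(csv_text, required=REQUIRED_COLUMNS):
    """Return the header row, or raise SchemaError naming the missing columns."""
    header = next(csv.reader(io.StringIO(csv_text)), [])
    present = {name.strip() for name in header}
    missing = sorted(set(required) - present)
    if missing:
        raise SchemaError("CSV is missing expected columns: " + ", ".join(missing))
    return header


good = "TimeInSeconds,MsBetweenPresents\n0.0,16.6\n"
renamed = "TimeInSeconds,FrameTime\n0.0,16.6\n"  # simulated schema change

check_schema(good)
try:
    check_schema(renamed)
    error_message = None
except SchemaError as exc:
    error_message = str(exc)
print(error_message)
```

A message that names the missing column is also a much better thing to paste into a chat window than a stack trace from deep inside the plotting code.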

There are alternative ways to produce slick-looking charts from CSV files, but I wanted to do it in one very specific way, and it took a total of 17(!) chat messages to Claude to go from a CLI script to a functional GUI that I actually use in my day-to-day work. If I had tried to code this on my own, we’d be nearing monkey-typewriter territory.

Infinite monkey theorem aside, this proved to me that Claude Code is genuinely useful not just as a tool in itself, but also for creating my own tools. I could spend tokens on pasting the CSVs to Claude every time I want a chart, but having my own tool encased in its own executable that I can pin to my taskbar is awesome, and it slotted into my workflow perfectly.

Because of how much time I spend looking at performance metrics, being able to share them in a way that doesn’t look sloppy is important to me, and this tool instantly became a huge part of my daily workflow. There are things that I could definitely improve about it, and it’s almost certainly got some issues under the hood that an experienced dev would scoff at, but it does exactly what I need it to do, and that’s good enough for me.