I blamed my RTX 4090 for poor performance, but this was the real problem

When I replaced my RTX 3090 with an RTX 4090, I expected frame rates to improve significantly across the board, especially since various benchmarks showed it to be up to 70% faster at 4K and up to 50% faster at 1440p. A jump like that should’ve made the 4090 perfect for both AAA and competitive gaming. That’s not what I experienced. I wasn’t getting anywhere near the numbers I saw in benchmarks. Games did run better, just not by as much as early reviews suggested.

At first, I suspected my GPU was power-limited, but I soon realized it was my aging CPU that was holding things back. My Ryzen 9 5900X, barely two years old at that point, suddenly couldn’t keep up with my new GPU. To be fair, not having the latest and fastest CPU didn’t seem like a big deal going in. But the more I watched my GPU usage while gaming, the harder the problem became to ignore.

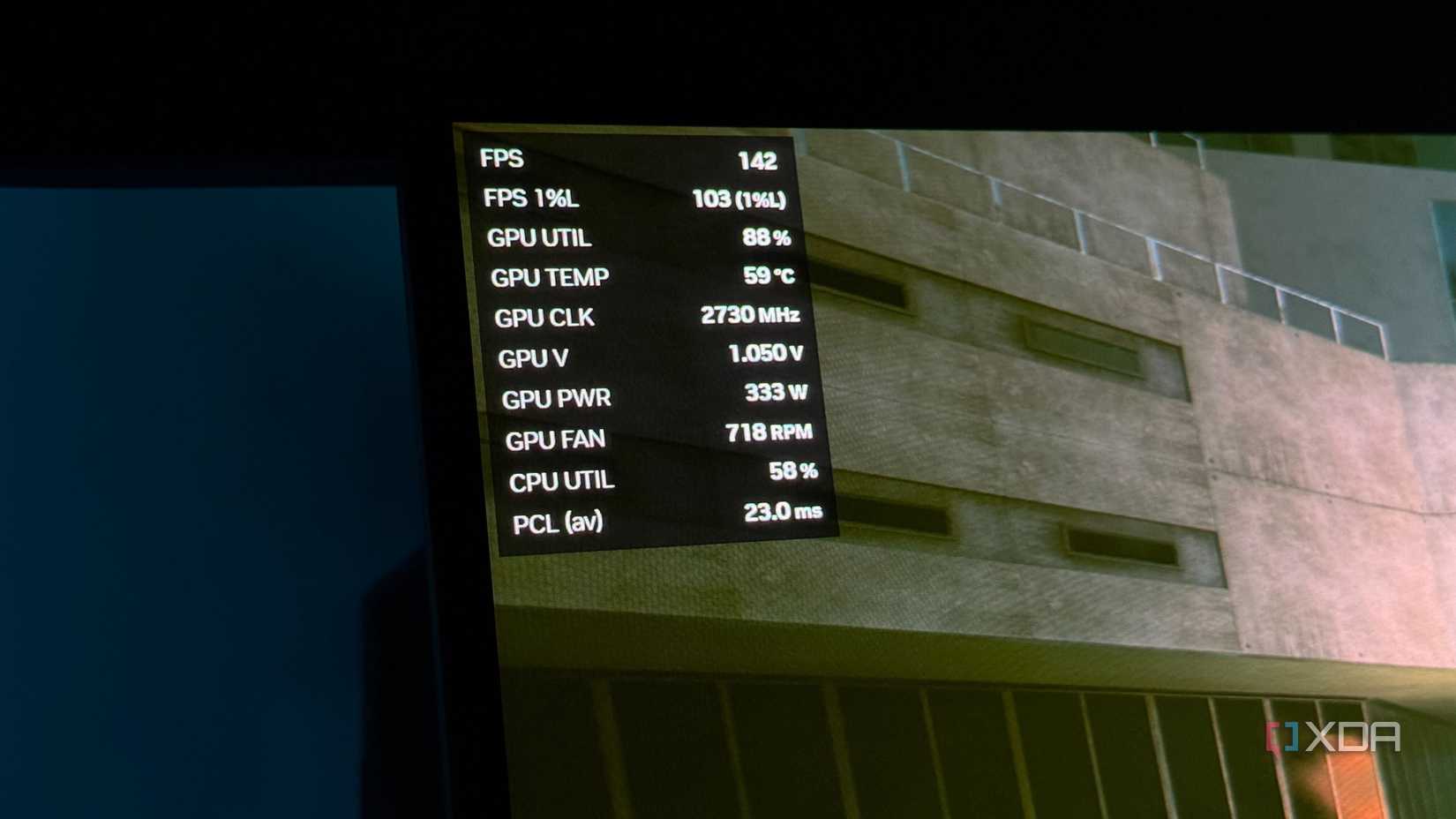

When my frame rates didn’t line up with the benchmarks, I launched MSI Afterburner and started monitoring everything in real time. That’s when things started to make sense. My GPU usage was nowhere near where it should’ve been for the performance I expected. Instead of sitting close to 99%, it hovered between 70% and 85% most of the time, depending on whether I was gaming at 1440p or 4K. That’s not how a GPU like this should behave in demanding scenarios.

The more games I tried, the clearer the pattern became. In AAA titles like Cyberpunk 2077 and Assassin’s Creed Shadows, GPU usage got close to 90%, but in competitive titles like Valorant and Apex Legends, it almost never exceeded 80%, even at 4K. At 1440p, it was worse: usage stayed around 70% even with every graphics setting maxed out. At that point, it was clear my 4090 wasn’t the limiting factor. It was just waiting for my aging CPU to keep up.
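
For readers who log GPU usage themselves, the rule of thumb behind this reasoning can be sketched in Python. Treat it as a rough heuristic, not a diagnostic tool: the 95% threshold, the `likely_cpu_bound` function, and the sample numbers are all illustrative assumptions, not measurements from my system. The idea is that when the GPU is the bottleneck in an uncapped game, it usually sits in the high 90s, so a median well below that suggests it’s waiting on the CPU. A frame cap or V-Sync also lowers GPU usage, so capped sessions shouldn’t be read this way.

```python
import statistics

# Assumed rule-of-thumb threshold, not a vendor specification: a GPU that is
# the bottleneck in an uncapped game usually sits in the high 90s.
GPU_BOUND_THRESHOLD = 95.0


def likely_cpu_bound(gpu_usage_samples, fps_capped=False, threshold=GPU_BOUND_THRESHOLD):
    """Return True if sustained GPU usage suggests the GPU is waiting on the CPU.

    A frame cap (V-Sync or an in-game limiter) also lowers GPU usage,
    so capped sessions are never flagged.
    """
    if fps_capped:
        return False
    # The median ignores brief dips, such as a loading hitch.
    return statistics.median(gpu_usage_samples) < threshold


# Hypothetical per-second GPU usage samples, in percent.
competitive_1440p = [68, 71, 70, 73, 69, 72, 70]
gpu_bound_reference = [97, 98, 99, 98, 97, 99, 98]

for name, samples in [("competitive 1440p", competitive_1440p),
                      ("GPU-bound reference", gpu_bound_reference)]:
    verdict = "likely CPU-bound" if likely_cpu_bound(samples) else "GPU-bound"
    print(f"{name}: median {statistics.median(samples)}% -> {verdict}")
```

With these made-up samples, the first session (median 70%) is flagged as likely CPU-bound and the reference session (median 98%) is not. Using the median rather than the mean keeps a few momentary dips from skewing the verdict.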

Initially, I focused on average FPS because that’s what most benchmarks highlight. Even though my gains were smaller than I’d hoped, I still expected games to feel smoother from the FPS uplift alone. That’s not what I got. Instead, I noticed small stutters and jitters during fast camera pans that didn’t match what the FPS counter was telling me. That’s not something you expect after upgrading to a flagship card like the RTX 4090, but pair it with an aging CPU like I did, and this is what you end up dealing with.

That’s when I stopped relying on the FPS counter and started monitoring frametimes instead. I saw spikes and fluctuations that matched exactly what I was feeling in-game. The worst part? Lowering graphics settings in the hope of smoothing things out only made the problem more obvious. The GPU had even more headroom, yet the inconsistency stayed, which confirmed the CPU had been the bottleneck all along. The only stopgap was to raise GPU-bound settings while lowering CPU-intensive ones like draw distance and crowd density, which eased the bottleneck somewhat.
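
To show why the FPS counter hid the stutters, here is a minimal Python sketch that computes average FPS and a 1% low from a list of frametimes in milliseconds. The frametime data is synthetic, not my actual capture, and the 1% low here uses one common definition (the average of the slowest 1% of frames, converted to FPS); monitoring tools don’t all calculate it the same way. The `spike_count` helper and its 2× median threshold are likewise hypothetical choices for illustration.

```python
import statistics


def average_fps(frametimes_ms):
    """Average FPS over the run: total frames divided by total time in seconds."""
    total_seconds = sum(frametimes_ms) / 1000.0
    return len(frametimes_ms) / total_seconds


def one_percent_low_fps(frametimes_ms):
    """1% low as the average FPS of the slowest 1% of frames (one common definition)."""
    slowest_first = sorted(frametimes_ms, reverse=True)
    count = max(1, len(slowest_first) // 100)
    return 1000.0 / statistics.mean(slowest_first[:count])


def spike_count(frametimes_ms, factor=2.0):
    """Count frames that took more than `factor` times the median frametime."""
    median = statistics.median(frametimes_ms)
    return sum(1 for ft in frametimes_ms if ft > factor * median)


# Synthetic data: 1,000 frames at a steady 6.9 ms, plus 10 frames that
# spike to 25 ms, like the hitches during a fast camera pan.
frametimes = [6.9] * 990 + [25.0] * 10

print(f"Average FPS: {average_fps(frametimes):.1f}")
print(f"1% low FPS:  {one_percent_low_fps(frametimes):.1f}")
print(f"Spikes:      {spike_count(frametimes)}")
```

With this made-up data, the average comes out at about 141 FPS, barely below the roughly 145 FPS a perfectly steady 6.9 ms would give, while the 1% low drops to 40 FPS. Ten slow frames out of a thousand hardly move the average, but they are exactly the hitches you feel in-game.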

To be fair, my Ryzen 9 5900X wasn’t really an outdated CPU back when the RTX 4090 launched. In fact, it would’ve still been enough if I had stuck to playing AAA games at native 4K with maxed-out settings. At that resolution, the GPU is still doing most of the heavy lifting anyway, so you don’t really need the fastest CPU on the market. Sure, the GPU usage would’ve been less than optimal, but I’d still be getting most of the performance I paid for without having to splurge on an AM5 upgrade.

The thing is, I rarely play AAA games. Most of the time, I’m playing fast-paced shooters and competitive titles like Battlefield 6, Call of Duty: Warzone, and Valorant, where high frame rates matter far more than visual fidelity. That’s exactly where the 5900X shows its age. In these titles, the CPU can remain the limiting factor even at 4K, especially once you lower settings to chase higher frame rates. That’s why I upgraded to a used 5800X3D the moment I found one. Despite having fewer cores (eight versus the 5900X’s twelve), its 96MB of 3D V-Cache improved my GPU usage significantly, and performance finally matched what I saw in early benchmarks.

If there’s anything I learned from this experience, it’s that you can’t overlook your CPU when you’re eyeing a high-end GPU like the RTX 4090 or 5090. I went into this upgrade thinking my 4090 would crush any game I threw at it, but in reality, it just exposed that my 5900X wasn’t fast enough for it. So unless you plan to play only GPU-intensive AAA titles at native 4K, set aside enough of your budget for a newer CPU that can actually keep up. Otherwise, you’ll end up where I did: with a GPU that has plenty of headroom but never gets fully utilized, and never delivers the performance you paid for.

You can’t cut corners if you’re spending over $1,000 on a GPU.