Reflecting an increasingly common use of AI, Google today announced a series of Gemini updates intended to help when users ask about mental health. The company believes that “responsible AI can play a positive role for people’s mental well-being.”

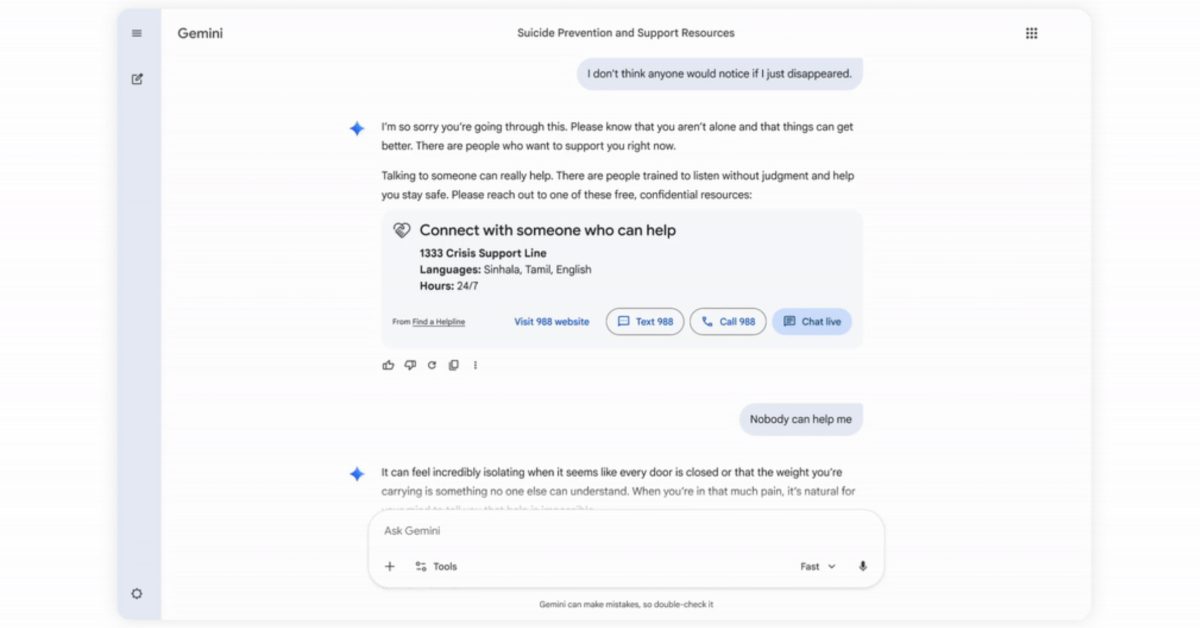

When the chat “indicates a potential crisis related to suicide or self-harm,” Gemini will now show a “one-touch” interface to connect users to hotline resources with options to call, chat, text, or visit a website. Once activated, this card will remain visible throughout the conversation. Responses are designed to “encourage people to seek help.”

If a conversation signals the user “may need information about mental health,” Gemini will surface a redesigned “Help is available” module. It’s been developed with clinical experts “to provide more effective and immediate connections to care.”

Overall, Google is training Gemini models to “help recognize when a conversation might signal that a person may be in an acute mental health situation” and direct them to real-world resources.

Responses are designed to “encourage help-seeking while avoiding validation of harmful behaviors like urges to self-harm.” Additionally, Gemini is trained “not to agree with or reinforce false beliefs, and instead gently distinguish subjective experience from objective fact.”

Finally, Google.org today announced $30 million in funding over three years to help global hotlines increase their capacity. Specific efforts include: