I switched from ChatGPT Plus to Claude Pro, and I haven’t regretted my decision

Choosing which LLM to use was quite simple at the beginning. ChatGPT was the clear front-runner for general use when I began using one daily, so when it came time to purchase a subscription, I went with ChatGPT Plus. It had my conversation history and non-identifying logs from my home lab, and I was familiar with how to prompt it to get what I wanted. I would sometimes put the same prompt into Anthropic’s Claude, just to see what it would say, but beyond that, my AI usage was largely limited to local LLMs and ChatGPT. That is, until now.

Anthropic has been pretty difficult to ignore of late. In March alone, they added memory capability to the free plan, released support for Excel and PowerPoint, added the ability to assign tasks to your computer from your phone, and gave Claude Code the ability to open your apps, click through UI, and test builds on its own. That’s really just scratching the surface. I’m not a developer, and though I’ve historically stuck mainly to the LLM side of AI, switching to Claude Pro has really opened my eyes to what this technology is actually capable of beyond that.

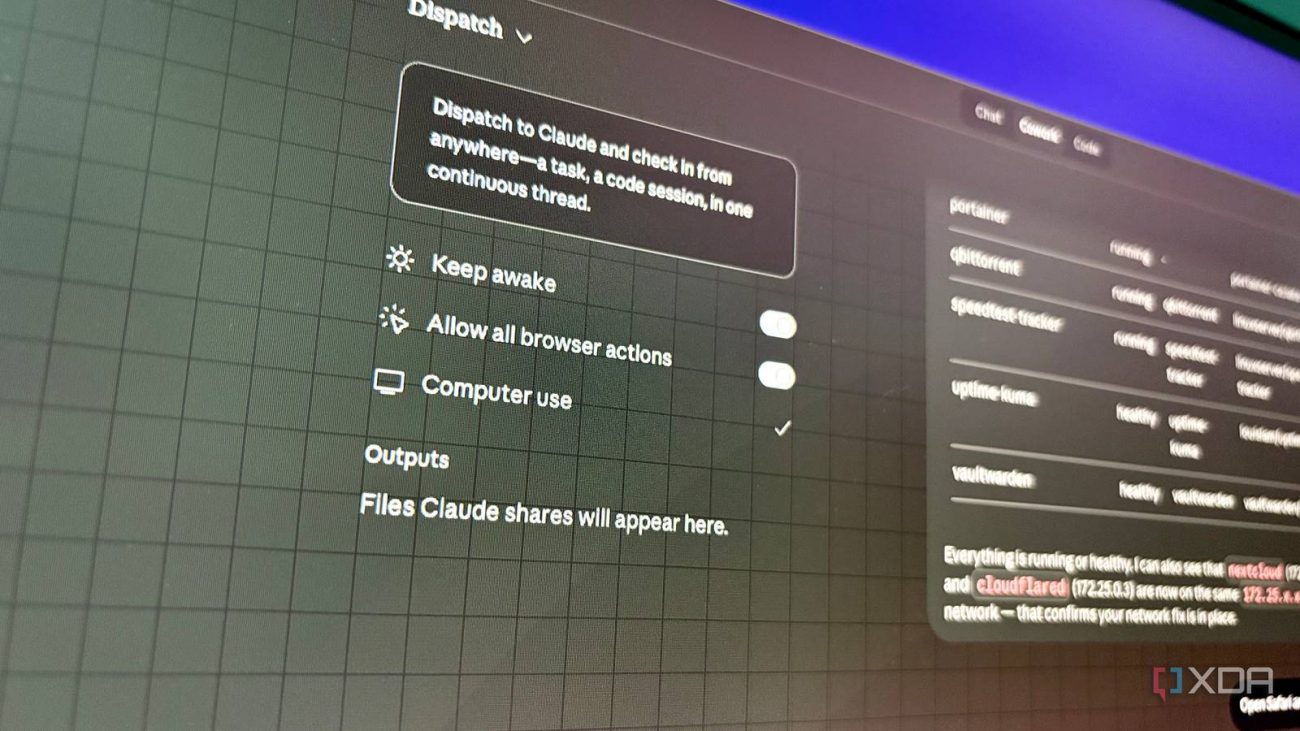

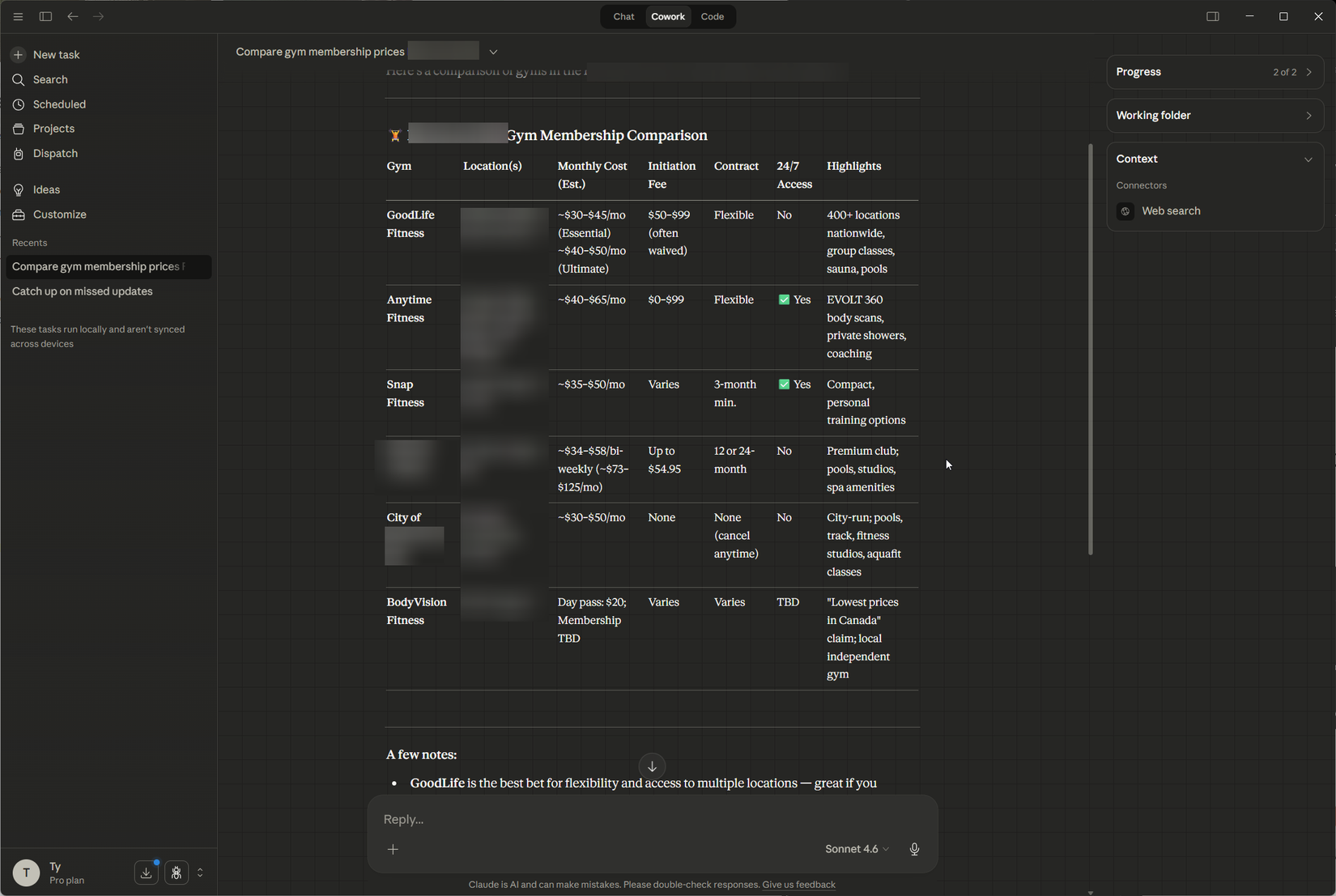

The features around the chat function are where Claude is really able to show off, and Cowork is an excellent example. After connecting it with Slack, Google, and my calendar, it’s able to catch me up on everything I missed with a single click. These apps all have their own version of an “AI overview” that does a similar thing, but instead of checking each app individually, I can click one button and go make my morning coffee, and by the time I come back, Claude has shown me everything I’ve missed. It can also perform much more complex tasks, like analyzing data for you or organizing files on your system, depending on how much you trust it, of course.

Before I get into anything relating to programming, I should note that my coding ability is quite rudimentary. I learned HTML, CSS, and Python in school, so I have a basic understanding of the principles, but I am by no means an expert. This might land me straight in the “danger zone” of software development. In other words, I know just enough to get myself into trouble.

I previously attempted to vibe-code a Python command-line script that would take CapFrameX .csv files as input and output a nice-looking benchmark graph, a simple task that an LLM should be able to handle. ChatGPT was fairly helpful in getting my initial scribblings into a somewhat functional script, but I had trouble getting arguments passed properly to the functions. It also tended to take the path of least resistance; if I asked it to add something to the script, it would quite literally tack it on instead of making the change the way a real developer would.

I started a Claude Code session in VS Code and asked it to review the script for errors and any opportunities to simplify. I also mentioned that the command-line arguments were difficult to use. In response, it dropped the arguments relating to per-run frametime outputs and distribution graphs, and instead opted to always generate both whenever the script is invoked. This was a useful change, and the newly generated script worked on the first try. I then asked it to modify the script to create a folder for each new run, and to change the colors of the chart output to match XDA’s color scheme. The result was clean-looking performance charts that are almost publication-ready.
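To give a rough idea of the shape such a script can take, here is a minimal sketch. It is not the script from this article. The column name `MsBetweenPresents`, the function names, the `--out` flag, and the timestamped folder naming are all assumptions made for illustration, and the "1% low" shown is only one of several definitions in use. Chart drawing is left out so the example needs nothing beyond the Python standard library.

```python
import argparse
import csv
import statistics
from datetime import datetime
from pathlib import Path

# Assumption: frame times (in ms) are stored in a column with this name.
FRAMETIME_COLUMN = "MsBetweenPresents"


def load_frametimes(csv_path, column=FRAMETIME_COLUMN):
    """Read one capture file and return its frame times in milliseconds."""
    with open(csv_path, newline="") as handle:
        return [float(row[column]) for row in csv.DictReader(handle)]


def summarize(frametimes_ms):
    """Return average FPS and a 1% low FPS figure for one capture."""
    average_fps = len(frametimes_ms) / (sum(frametimes_ms) / 1000.0)
    # One possible "1% low": mean of the slowest 1% of frames, as FPS.
    slowest = sorted(frametimes_ms, reverse=True)
    worst = slowest[: max(1, len(slowest) // 100)]
    return {
        "average_fps": average_fps,
        "one_percent_low_fps": 1000.0 / statistics.mean(worst),
    }


def make_run_dir(base_dir):
    """Create and return a timestamped folder for this run."""
    run_dir = Path(base_dir) / datetime.now().strftime("%Y%m%d-%H%M%S")
    run_dir.mkdir(parents=True, exist_ok=True)
    return run_dir


def main(argv=None):
    """Parse arguments once, then hand plain values to the helpers."""
    parser = argparse.ArgumentParser(description="Summarize frametime captures.")
    parser.add_argument("captures", nargs="+", help="capture .csv files")
    parser.add_argument("--out", default="runs", help="base output folder")
    args = parser.parse_args(argv)

    run_dir = make_run_dir(args.out)
    # No per-output flags: every run always writes the full summary.
    with open(run_dir / "summary.csv", "w", newline="") as handle:
        writer = csv.DictWriter(
            handle, fieldnames=["capture", "average_fps", "one_percent_low_fps"]
        )
        writer.writeheader()
        for path in args.captures:
            stats = summarize(load_frametimes(path))
            writer.writerow({"capture": Path(path).name, **stats})
    return run_dir
```

Adding a `main()` call at the bottom of the file and running it with one or more capture paths would write a `summary.csv` into a new folder under `runs/`. Parsing arguments in one place and passing ordinary values to small functions is one way to avoid the argument-passing trouble described above.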

These extra bells and whistles do come at a steep usage cost, and Anthropic’s usage limits are extremely tight, even for Pro subscribers. Within an hour of testing a couple of Cowork capabilities and fixing my chart-generating script, I had hit 70% usage. One more Cowork task put me at 100% for my current session, which felt a bit insane. For the $31 CAD I paid for one month, running into usage limits within an hour felt kind of ridiculous. When I used ChatGPT Plus, I never ran into any limits, and it was also cheaper, coming in at just $25 CAD a month. OpenAI doesn’t publish specific usage limits for Plus, just the ability to “have long chats over multiple sessions.”

Despite the higher price and strict usage limits, Claude Pro felt much more integrated with the workflows I trusted it with, while ChatGPT felt like a tool I went to when I needed an LLM. I don’t regret my decision to switch over, at least for now, since more of my LLM usage can seemingly be relegated to a free plan. The agentic tools that Claude has, along with its coding prowess, proved to me that it’s worth toughing out the limits, at least until something else catches up.