I gave Claude Code a kid’s activity book to solve: here’s where it fell…


A little while ago, I let Claude Code automate things on my desktop, and I was thoroughly impressed by what I saw. However, after posting my findings, people in the comments were quick to point out that the tasks I had it perform were actually very easy jobs for Claude Code to do. This surprised me, as I come from a time when AI couldn’t even beat a human at Go. Now, I can tell Claude to take control of World of Warcraft, log into a specific character, and let me know when I’m in-game, and that’s apparently unimpressive.

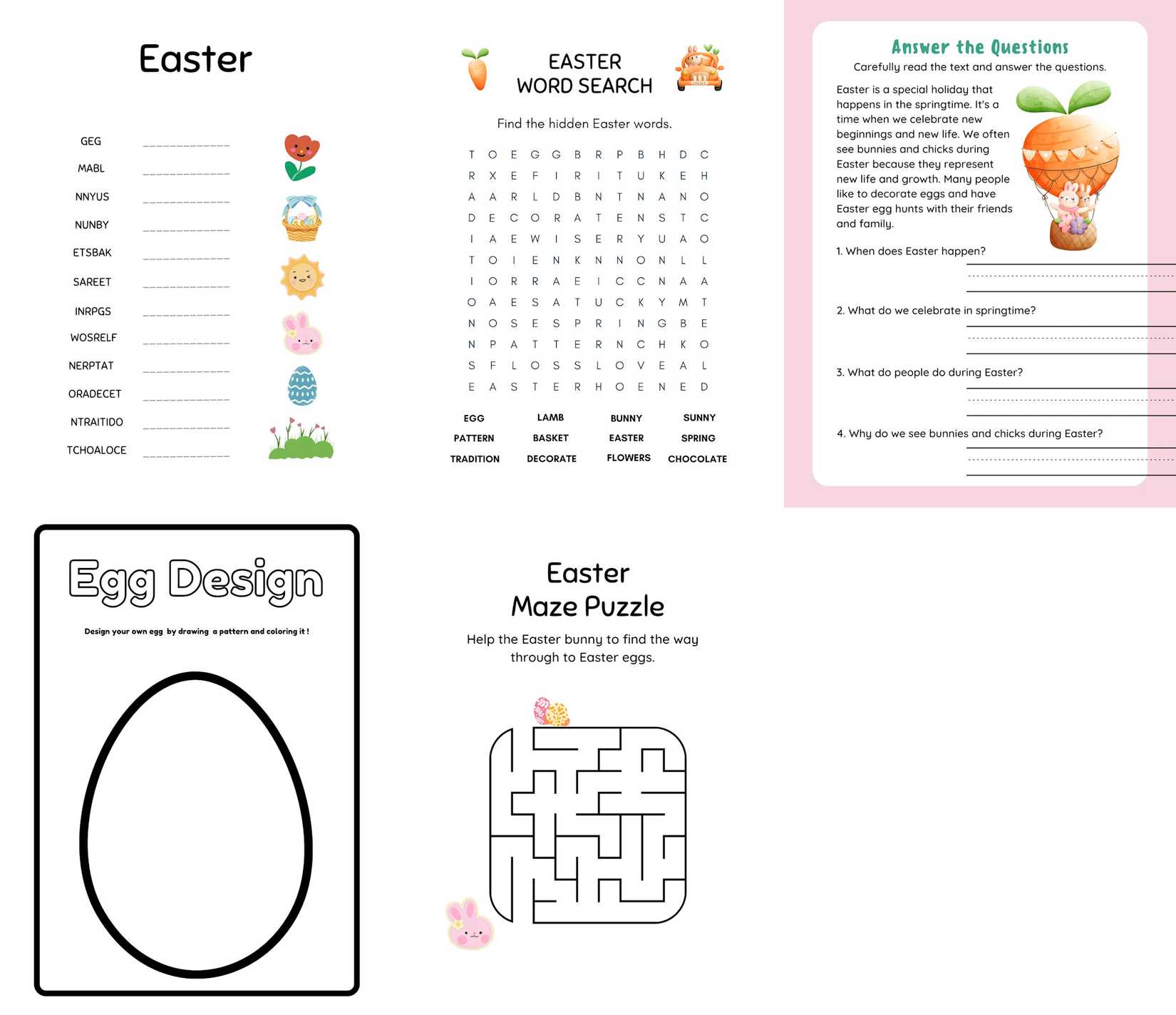

But the comments also gave me a wicked idea. If asking Claude Code to perform simple desktop actions was child’s play, what would happen if we literally gave Claude Code ‘child’s play’ to solve? And thus, I challenged Claude Code to solve five Easter worksheets for kids to see if it had the chops.

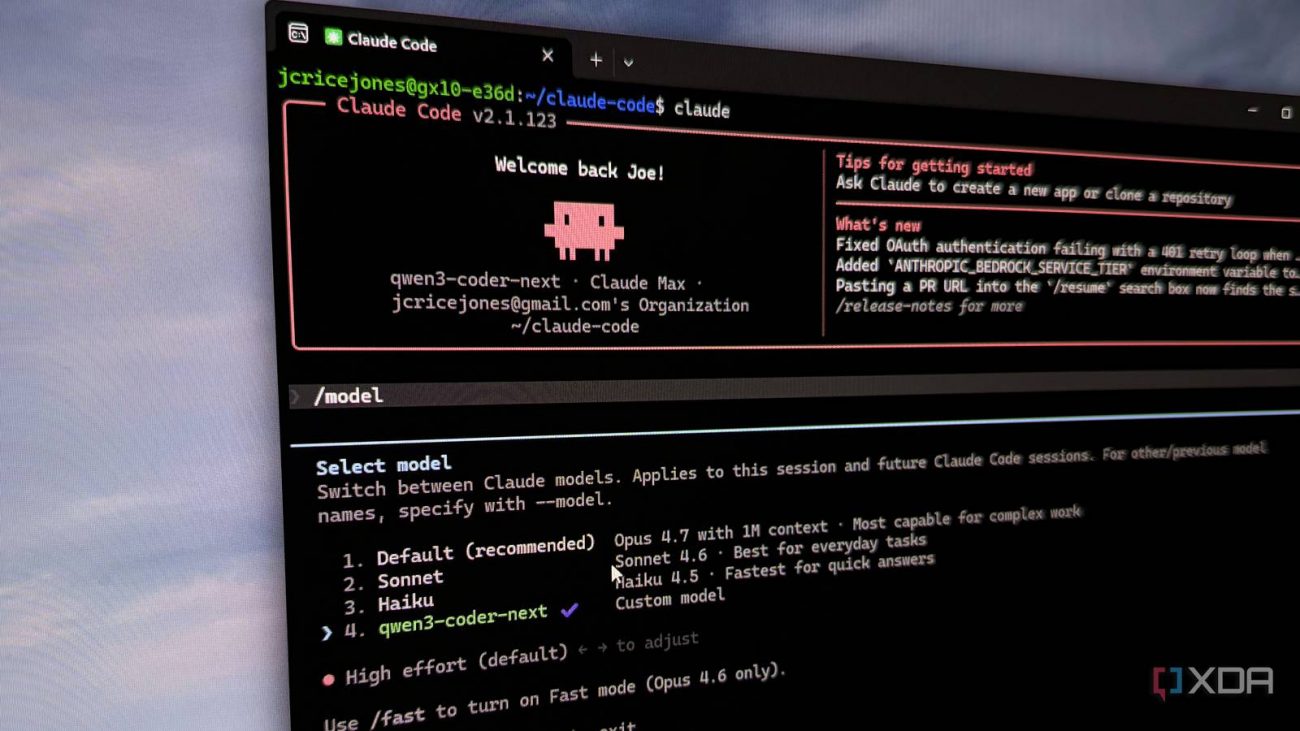

First, let’s lay out the challenge Claude Code will be performing. I will set Claude Code to Sonnet 4.6, with Medium reasoning. I will then direct it to a folder called ‘The Trials’. In the folder are five activity sheets, which came from the “Kids Activity Book Mega Bundle” from PixoraPrintsCrafts on Etsy.

Here’s what I believe will happen, starting from the task I believe Claude will find easiest and ending with the one I think it will find the hardest:

For the first task, the questions page, Claude quickly read the text, saw the questions, and answered them in the chat log with zero problems, as expected. Getting the words positioned on the answer lines, however, proved very difficult. Claude accidentally wrote them misaligned with the actual lines, so while solving the problems took some time and tokens, far more went into moving the words into the right place and erasing the errors.

The main issue was that Claude had to write a Python script to add the words. Claude had to calculate the coordinates for each word, then run a script that pastes all its answers in their designated spots. If something was wrong with the coordinates it picked, the entire thing would look awful. Still, it managed to get the alignment down after a few tries.
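To make the coordinate step concrete, here is a minimal sketch of one way to find the answer lines and work out where each word should go. It is not the script Claude wrote: it assumes the worksheet has already been turned into a grid of 1s (dark) and 0s (light), the helper names `find_answer_lines` and `place_answers` are hypothetical, and the answers are toy data.

```python
def find_answer_lines(pixels, min_dark_fraction=0.5):
    """Return the top y-coordinate of each horizontal rule in a binary image.

    `pixels` is a list of rows where 1 = dark and 0 = light. A row counts as
    part of a rule when at least `min_dark_fraction` of it is dark; runs of
    consecutive dark rows (a thick line) are merged into one rule.
    """
    line_tops = []
    previous = None  # y of the last row that qualified as part of a rule
    for y, row in enumerate(pixels):
        if sum(row) >= min_dark_fraction * len(row):
            if previous is None or y != previous + 1:
                line_tops.append(y)  # first row of a new rule
            previous = y
    return line_tops


def place_answers(answers, line_tops, text_height, x_margin=0, gap=2):
    """Pair each answer with a top-left (x, y) that sits just above its rule."""
    if len(answers) > len(line_tops):
        raise ValueError("more answers than detected answer lines")
    return [
        (answer, (x_margin, top - text_height - gap))
        for answer, top in zip(answers, line_tops)
    ]


# Toy 10x20 "worksheet": a two-pixel rule at y=3-4, a one-pixel rule at y=8,
# and a short stroke at y=6 that is too sparse to count as a rule.
width = 20
blank, rule = [0] * width, [1] * width
stroke = [1, 1, 1] + [0] * (width - 3)
pixels = [blank, blank, blank, rule, rule, blank, stroke, blank, rule, blank]

lines = find_answer_lines(pixels)
placements = place_answers(["Bunny", "Basket"], lines, text_height=1, x_margin=5, gap=1)
print(lines)       # [3, 8]
print(placements)  # [('Bunny', (5, 1)), ('Basket', (5, 6))]
```

With Pillow, the pixel grid could come from `Image.convert("L")` plus a brightness threshold, and each word could then be drawn with `ImageDraw.Draw(img).text((x, y), answer, fill="black")`. Treating any mostly dark row as a rule is a crude heuristic: a thick border or a row of large print would qualify too, which is exactly the kind of misfire that leaves answers floating off their lines.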


The Easter Word Scramble was a similar story to the questions page. Claude blitzed through the unscrambling, wrote the Python code to insert the text…then accidentally wrote the answers on top of the scrambled words. Claude looked in the Recycle Bin for a backup, and when it couldn’t find one, it reconstructed the worksheet manually. It was at this point that I realized I should have kept a backup and told Claude about it in case it made errors.
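The backup lesson is easy to automate. A minimal sketch, assuming the worksheets are image files on disk: copy the pristine file once before any editing script runs, so a bad write can be undone rather than reconstructed. The `.orig` naming scheme and the helper names are hypothetical, and the demo uses placeholder bytes instead of real image data.

```python
import shutil
import tempfile
from pathlib import Path


def backup_path(path):
    """Hypothetical naming scheme: word_scramble.png -> word_scramble.orig.png."""
    p = Path(path)
    return p.with_name(f"{p.stem}.orig{p.suffix}")


def backup_before_edit(path):
    """Copy the untouched file once; later calls keep the first backup intact."""
    backup = backup_path(path)
    if not backup.exists():
        shutil.copy2(path, backup)
    return backup


def restore_from_backup(path):
    """Overwrite the edited file with its pristine copy."""
    shutil.copy2(backup_path(path), path)


# Demo in a throwaway directory, with bytes standing in for image data.
with tempfile.TemporaryDirectory() as tmp:
    sheet = Path(tmp) / "word_scramble.png"
    sheet.write_bytes(b"original worksheet")
    backup_before_edit(sheet)
    sheet.write_bytes(b"answers written over the scrambled words")  # the mistake
    backup_before_edit(sheet)  # runs again, but does not clobber the good backup
    restore_from_backup(sheet)
    restored = sheet.read_bytes()

print(restored)  # b'original worksheet'
```

The existence check is the important part: if the backup step ran again after the mistake, a naive copy would replace the good backup with the damaged sheet.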

I wasn’t sure how it would handle the word search. I was pretty confident Claude had learned from the last two activities how to calibrate its drawing tools properly and draw straight lines, but I wasn’t sure how it would find the words in the first place.

To get the job done, Claude cropped out everything but the grid so it could better see the letters. Then it wrote some code to automatically locate and solve the words. It involved putting every line into an array, then performing a for loop that treated the array as if it were a grid, checking the surrounding letters for each word. You can see the code it wrote in this Pastebin link. It was a very cool solution, and I was very impressed with Claude’s capabilities. I kind of wish I had given it a puzzle with diagonals to solve, too.
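Claude’s actual code is in the Pastebin link; what follows is a separate, minimal sketch of the same brute-force idea, assuming the cropped grid has been transcribed into a list of equal-length strings. For every cell and every allowed direction, it checks whether the word’s letters line up. The names `find_word`, `ACROSS_AND_DOWN`, and `ALL_DIRECTIONS` are hypothetical, and passing `ALL_DIRECTIONS` instead of the default also covers diagonals and backwards words.

```python
# Straight-line directions as (row step, column step).
ACROSS_AND_DOWN = ((0, 1), (1, 0))
ALL_DIRECTIONS = tuple(
    (dr, dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1) if (dr, dc) != (0, 0)
)


def find_word(grid, word, directions=ACROSS_AND_DOWN):
    """Return ((start_row, start_col), (end_row, end_col)) for `word`, or None."""
    rows, cols = len(grid), len(grid[0])
    n = len(word)
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != word[0]:
                continue  # cheap skip: the first letter must match
            for dr, dc in directions:
                end_r, end_c = r + dr * (n - 1), c + dc * (n - 1)
                if not (0 <= end_r < rows and 0 <= end_c < cols):
                    continue  # the word would run off the grid
                if all(grid[r + dr * i][c + dc * i] == word[i] for i in range(n)):
                    return (r, c), (end_r, end_c)
    return None


grid = [
    "EGGSX",
    "BXXXX",
    "UXXXX",
    "NXXXX",
    "NXXXX",
    "YXXXX",
]
print(find_word(grid, "EGGS"))                 # ((0, 0), (0, 3))
print(find_word(grid, "BUNNY"))                # ((1, 0), (5, 0))
print(find_word(grid, "EXX"))                  # None: it only runs diagonally
print(find_word(grid, "EXX", ALL_DIRECTIONS))  # ((0, 0), (2, 2))
```

The returned start and end cells are what a drawing step would convert into pixel coordinates for a straight line through each word.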

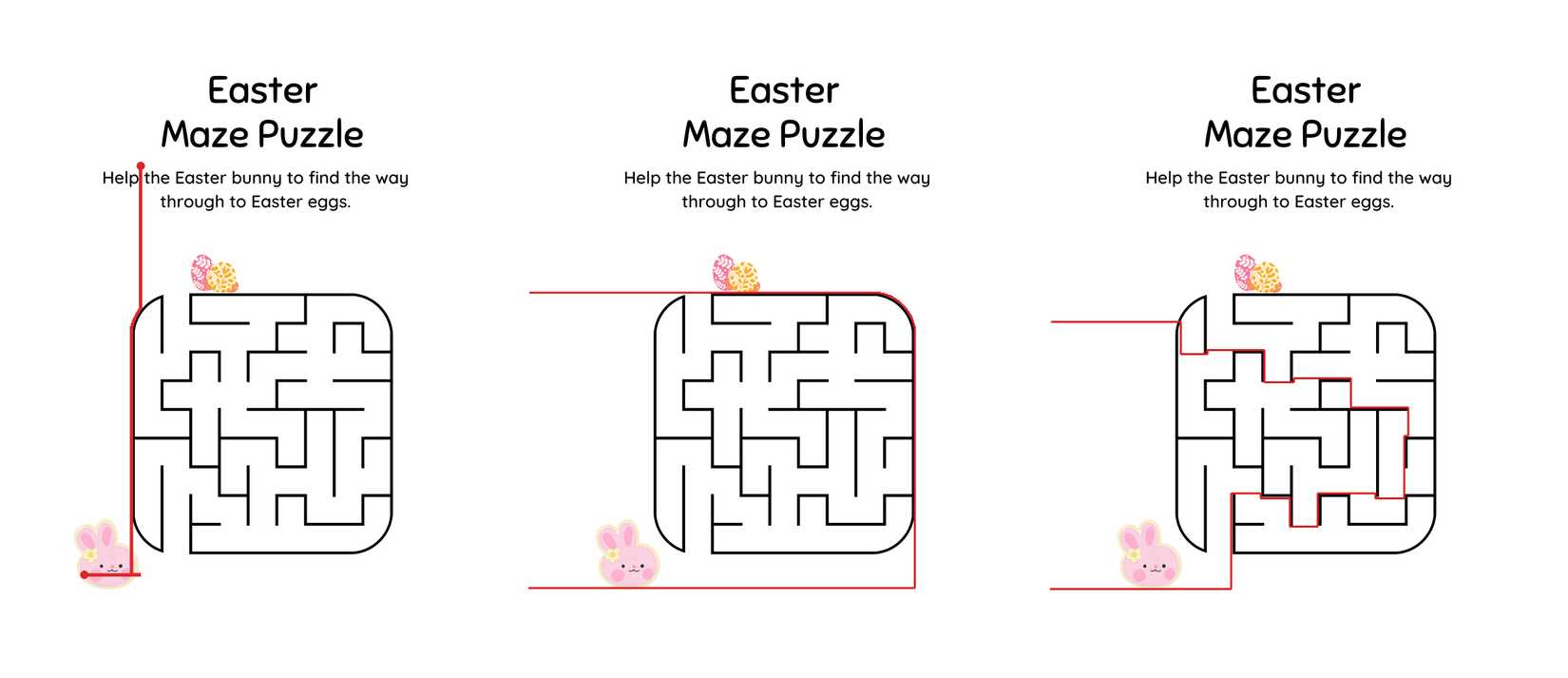

The maze is where things fell apart. I gave Claude instructions to go from the rabbit to the eggs through the maze, so that it wouldn’t try going around the outside. So, Claude got to work by coding a Breadth-First Search (BFS) tool that would analyze the maze and find the shortest route…and it went straight up the left side.
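BFS explores cells in order of their distance from the start, so on a grid where every step costs the same, the first time it reaches the goal it has found a shortest path. Nothing in it prefers the interior: if an open route around the outside is shorter, that is the one it returns. The sketch below is a generic BFS, not the tool Claude generated, and assumes the maze has been reduced to a grid of strings with `#` for walls; `bfs_path` is a hypothetical name.

```python
from collections import deque


def bfs_path(grid, start, goal):
    """Shortest 4-connected path from start to goal as a list of (row, col), or None.

    Any character other than '#' is treated as open floor.
    """
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}  # parent links; also serves as the visited set
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nxt = (r + dr, c + dc)
            if (0 <= nxt[0] < rows and 0 <= nxt[1] < cols
                    and grid[nxt[0]][nxt[1]] != "#" and nxt not in came_from):
                came_from[nxt] = cell
                queue.append(nxt)
    return None


maze = [
    "S.#...",
    ".##.#.",
    "....#G",
]
path = bfs_path(maze, (0, 0), (2, 5))
print(len(path))  # 12
```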

Claude noted this, so it cut off the left side as a candidate and tried flood-filling the interior. It then used the BFS tool to find the way through. This time, the tool used the second laziest route: going around the right side. Claude fixed this by cropping the image to only show the maze, then tried BFS one more time. Sure enough, this time, the tool worked…kind of. Claude seemed pleased to present me with the solved maze despite the fact that the tool gave up at the very end and drove through a wall, but if Claude is happy with it, then so am I.
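Flood filling is the same neighbor-by-neighbor expansion as BFS, minus the goal: it collects every open cell connected to a seed. A useful sanity check, sketched below under the same assumption of a `#`-walled grid, is whether the filled region reaches the edge of the image. If it does, the walls have a gap and a solver can leak outside, which is consistent with the gaps in the left and right outer walls that Claude later described. The function names are hypothetical.

```python
from collections import deque


def flood_fill(grid, seed):
    """Return the set of open cells 4-connected to `seed`; '#' marks a wall."""
    rows, cols = len(grid), len(grid[0])
    region = {seed}
    queue = deque([seed])
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] != "#" and (nr, nc) not in region):
                region.add((nr, nc))
                queue.append((nr, nc))
    return region


def touches_border(region, rows, cols):
    """True if any cell of the region lies on the outermost row or column."""
    return any(r in (0, rows - 1) or c in (0, cols - 1) for r, c in region)


sealed = [
    "#####",
    "#..##",
    "#.#.#",
    "#####",
]
leaky = [
    "#####",
    "...##",  # gap in the left wall at (1, 0)
    "#.#.#",
    "#####",
]
for name, maze in (("sealed", sealed), ("leaky", leaky)):
    region = flood_fill(maze, (1, 1))
    print(name, sorted(region), touches_border(region, len(maze), len(maze[0])))
```

Cropping the image to the maze, as Claude eventually did, removes the outside corridor altogether, so a gap in the outer wall no longer leads anywhere useful.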

This one had the most interesting solving process for me. It was a sign that Claude itself understood that the line had to go through the maze, but when the tool it deployed kept taking the easy route out, it would go back to the drawing board and fix the issue. Much like how I sometimes wrestle with an AI to get what I want, Claude was finagling its own algorithmic tool to work the way it wanted.

To wind down after all that hard thinking, I gave Claude an extracurricular activity to design an Easter egg. Honestly, I had zero idea what it was going to do; I simply showed it the image, told it to design an Easter egg, and let it do its thing.

Claude responded by first taking the measurements of the egg. Because it had been measuring coords a ton during the last few tasks, it took barely any time to map out the inside of the egg. Once it was done, it used Python to generate a pleasing design in the middle. I loved it so much that I asked Claude to add its name and age to the image, which it did. Yes, it says it’s 2 years old. Adorable.
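Mapping out the inside of the egg amounts to knowing, for every row, where the outline starts and ends. A minimal sketch, assuming the egg is approximated by an ellipse with center `(cx, cy)` and radii `rx`, `ry` (real egg outlines are lopsided, and Claude measured the actual image instead): for each row it computes the horizontal span inside the ellipse and assigns a color per horizontal band. The function names and the palette are hypothetical.

```python
import math


def ellipse_span(y, cx, cy, rx, ry):
    """Return (x_left, x_right) where row y crosses the ellipse, or None if it misses."""
    t = (y - cy) / ry
    if abs(t) > 1:
        return None
    half_width = rx * math.sqrt(1 - t * t)
    return (cx - half_width, cx + half_width)


def striped_egg(cx, cy, rx, ry, band_height, palette):
    """List (y, x_left, x_right, color) fill spans, cycling colors every band_height rows."""
    spans = []
    for y in range(cy - ry, cy + ry + 1):
        span = ellipse_span(y, cx, cy, rx, ry)
        if span is None:
            continue
        band = (y - (cy - ry)) // band_height
        spans.append((y, span[0], span[1], palette[band % len(palette)]))
    return spans


spans = striped_egg(cx=50, cy=50, rx=30, ry=40, band_height=10, palette=["pink", "yellow"])
print(len(spans))  # 81
print(spans[40])   # (50, 20.0, 80.0, 'pink')
```

Each span could then be painted with Pillow’s `ImageDraw.Draw(img).line([(x_left, y), (x_right, y)], fill=color)`, which keeps every stripe inside the outline.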

As it was thinking about what to write, it performed a task called “List available fun fonts,” and I was secretly praying it wouldn’t pick Comic Sans. Unfortunately, Claude proved to me that even AI can’t resist the siren’s song of the most infamous font in the world.

Given that Claude had managed to beat every challenge I gave it (at least, if we let the maze error slide), I decided to ask Claude itself which activity it found the most engaging. As you might imagine, it picked the one where it took about half an hour to solve a kid’s maze:

> Honestly, the maze was my favorite — it was a proper puzzle that fought back. The BFS pathfinding kept finding sneaky routes around the outside of the maze instead of through it, and I had to dig into the actual pixel structure of the image to understand why. Figuring out that both the left and right outer walls had matching gaps, and that the solver was escaping through them and looping around the exterior, felt like a genuine “aha” moment. There’s something satisfying about a problem that requires real debugging rather than just following a straightforward process.

So there you have it, folks. The next time you want to supply some enrichment time for your LLM, give it a simple maze to solve. Now I see why they put these things on kids’ menus.

I put Claude Code through these trials to see whether I had really underestimated its abilities in my last article, and sure enough, this LLM is a lot smarter than I originally thought. It came up with clever ways to solve each problem and delivered some very impressive results with no meddling on my end. Maybe we’ll do Where’s Waldo next.