Google says it’s investigating the “nudify” app problem on the Play Store, a story that readers and analysts are following closely.


AI technology has advanced rapidly in recent years. Unfortunately, AI-generated fake nude images have spread just as quickly. Although Apple and Google say they crack down on harmful apps that enable this, a recent report found that both companies’ app stores host numerous “nudify” apps. Google has now responded to the report.

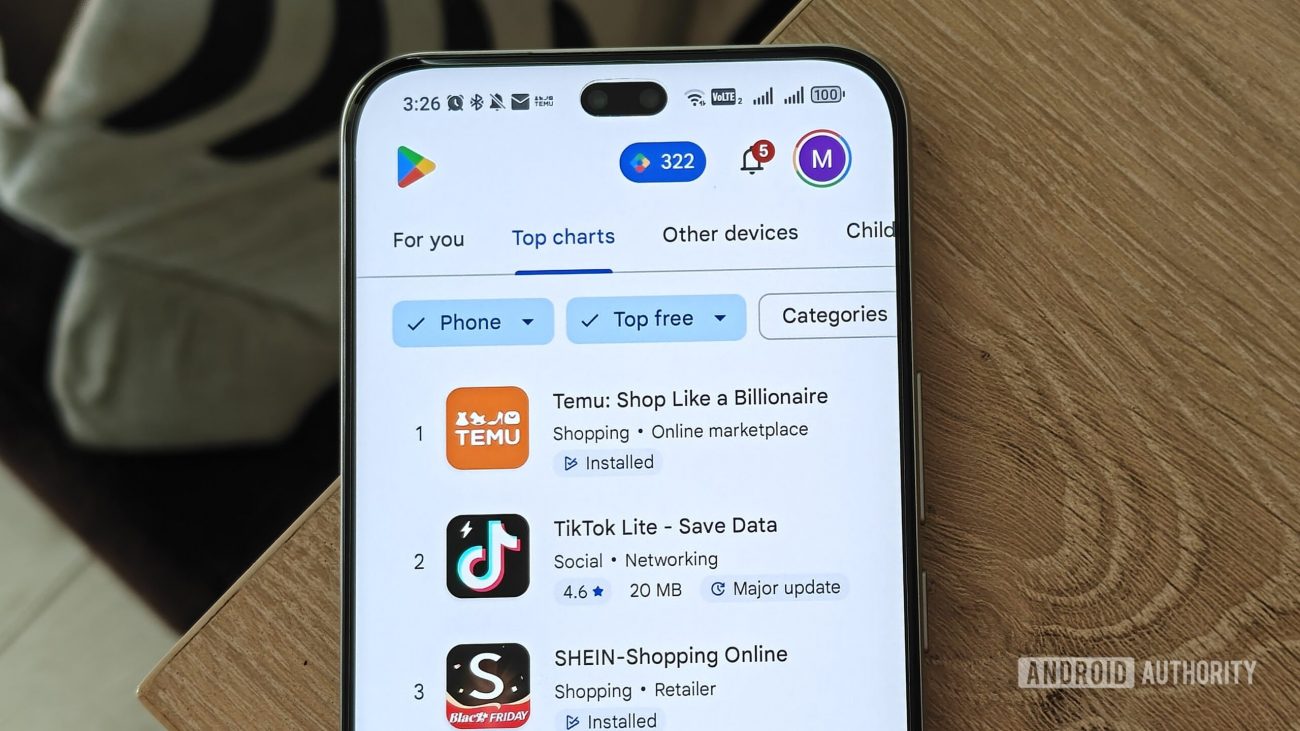

As a quick refresher, the earlier report notes that Apple’s and Google’s policies clearly prohibit apps that promote exploitation or abuse. Despite that, searching for terms like “nudify” or “undress” on the App Store or Google Play turns up dozens of these exploitative apps. Both marketplaces reportedly even advertise these tools and suggest them through autocomplete. More troubling still, some are rated “E” for Everyone, so even children can legally download them.

For its part, Google told Android Authority that it is taking appropriate action and investigating the issue. A company spokesperson stated:

Apple appears to have started taking action as well. The Cupertino-based company also responded to the earlier report, telling Bloomberg that it had removed 15 apps. For reference, the report found 20 nudify apps on the Play Store and 18 on the App Store.
