One tiny change made my local LLMs more useful than ChatGPT for real work

As much as I adore my local LLMs, they can’t hold a candle to the reasoning capabilities of their cloud counterparts, and for good reason. ChatGPT, Perplexity, and other cloud AI services can run models with hundreds of billions of parameters without breaking a sweat, while my GPUs can take a few minutes to come up with answers if I try running 30B (or even 20B) models on my local LLM providers.

That said, there are a couple of ways to make my LLMs more capable, with retrieval-augmented generation (or RAG) being the most significant one, since it can make local models more effective than ChatGPT and its rival clouds.

If you’ve tried to run LLMs, you’ve probably come across a couple of scenarios where they spout utter nonsense even after you’ve explained your query in great detail and used all the prompt optimization tips in the book. That’s called AI hallucination, and between outdated training data, contextual failure, and their tendency to generalize answers, LLMs tend to suffer from this problem, especially low-parameter models.

That’s when retrieval-augmented generation comes in handy. Rather than relying on an LLM’s static training data, RAG lets AI models retrieve information from external sources and use it to generate responses. In simpler, local LLM terms, RAG is what lets me add a bunch of documents, images, and other information to my models, thereby helping them become more context-aware the next time I question them. Plus, it helps me increase their accuracy without scouring the web for specific models that fit into my niche tasks or painstakingly retraining their algorithms on my data.
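To make the retrieve-then-generate idea concrete, here is a minimal sketch in Python. It is a toy rather than how LM Studio or any other tool implements RAG: it scores documents with simple word-count cosine similarity instead of a real embedding model, and the `NOTES` list, its contents, and all function names are hypothetical examples. The point is only to show the flow, where the most relevant snippet is found first and then pasted into the prompt that goes to the model.

```python
import math
import re
from collections import Counter

# Hypothetical home lab notes standing in for a real knowledge base.
NOTES = [
    "The Proxmox backup job runs every night at 02:00 and writes to the NAS.",
    "The Pi-hole container uses the static address 192.168.1.53.",
    "Paperless-ngx stores scanned invoices under the consume folder.",
]

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two word-count dictionaries."""
    dot = sum(count * b[word] for word, count in a.items() if word in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, documents, top_k=1):
    """Return the top_k documents most similar to the query."""
    query_counts = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query_counts, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(query, documents, top_k=1):
    """Put the retrieved snippets in front of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, top_k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )
```

Calling `build_prompt("What address does the Pi-hole container use?", NOTES)` yields a prompt that already contains the Pi-hole note, so even a small model has the answer sitting in its context instead of having to guess.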

The best part? RAG lets me feed personal information into my LLMs, including everything from simple meal analysis and code files to private documents that I’d never share with cloud-based platforms. For example, if I wanted to use local models when troubleshooting random home lab problems, I could toss all the documentation I’ve built over the years into the LLM provider and enable RAG capabilities before asking for help. This way, even the low-parameter LLMs can access information that doesn’t exist in their training data, thereby cutting their hallucination tendencies down a notch. And unlike ChatGPT, both the AI models and the knowledge base they can harness remain on my home network, so I don’t have to worry about cloud-based clankers gaining access to personal documents.

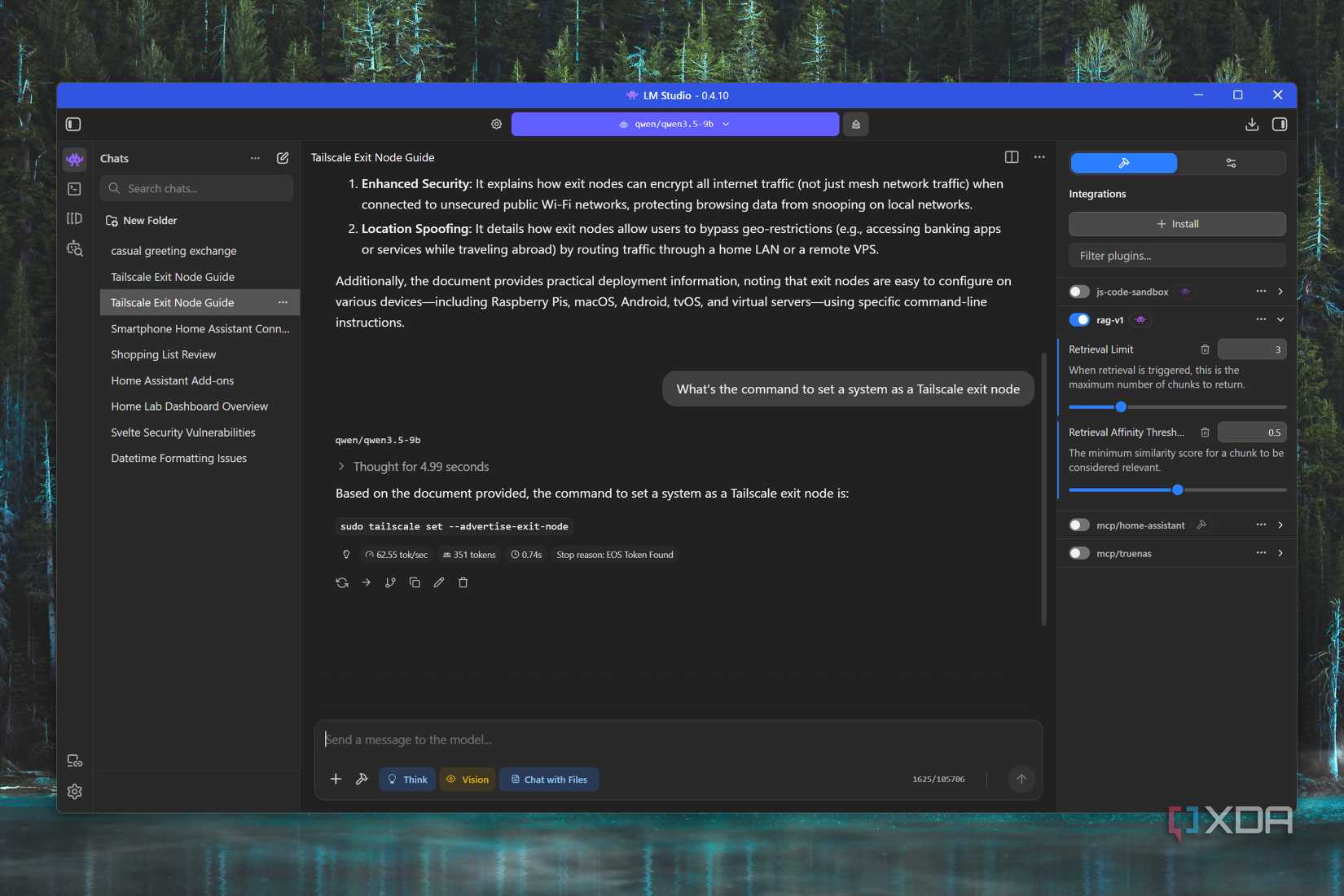

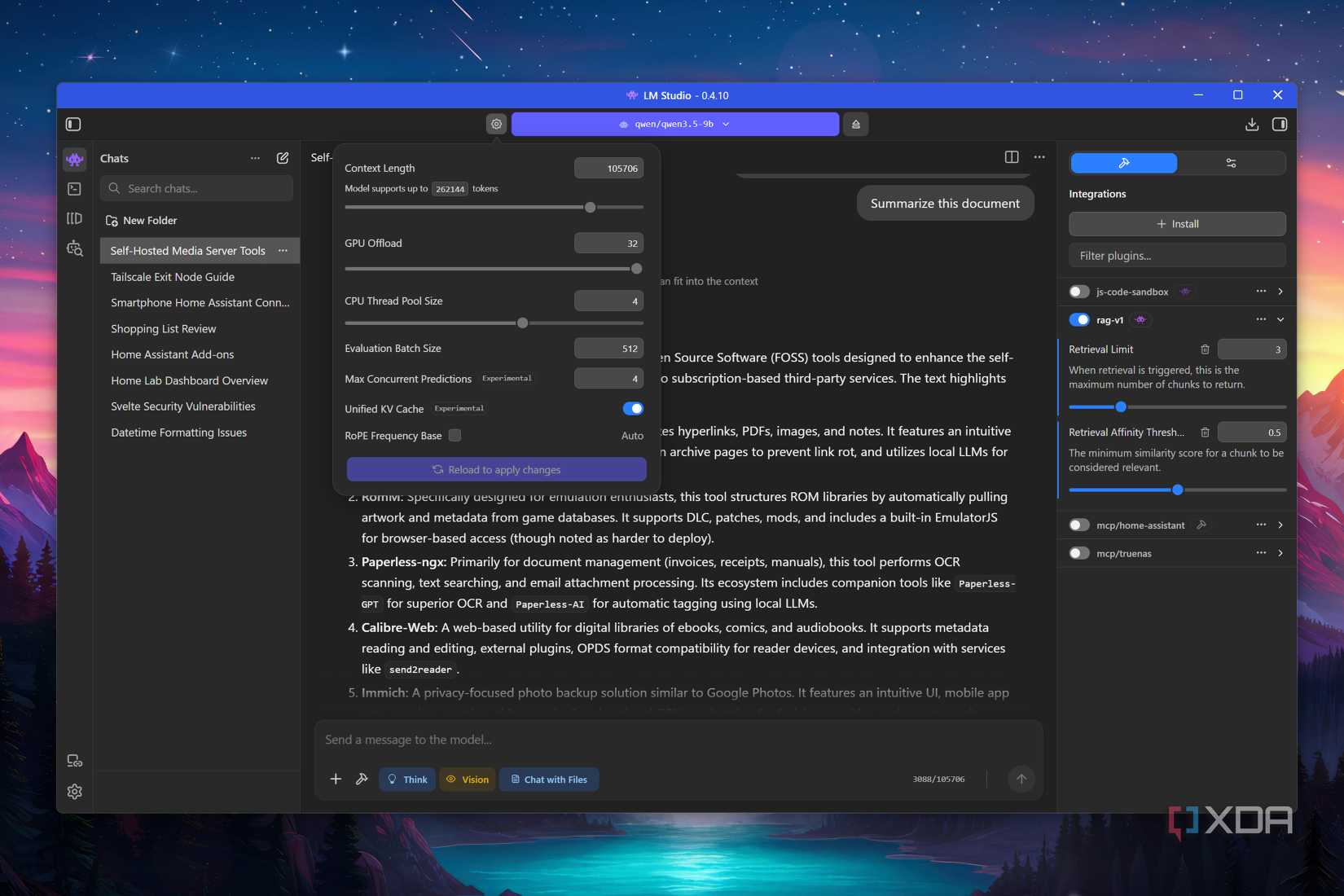

The term “retrieval-augmented generation” may sound like something overly technical that requires complex AI workflows. But rest assured, it’s really simple to implement in a completely local setup such as mine. I’ve started using LM Studio on my RTX 3080 Ti, and this local LLM provider has a handy RAG plugin built into the app. It’s available under the integration section, right above all the MCP servers I use with my models. Although it currently only supports a maximum of five documents with a combined size of 30MB, it’s great for adding extra context to my LLMs.

The only caveat is that the default context length of 4096 tokens is far too low even for a single document, so I often increase it several times over before I start querying my LLM. I’ve been using 9B models on my gaming machine a lot these days, and I haven’t had any performance issues even after adding long .docx, .xls, and .pdf files to my LLM chats.
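For a rough feel of why 4096 tokens runs out so quickly, here is a back-of-the-envelope sketch in Python. It assumes roughly four characters per token, which is only a common rule of thumb for English text and not the count any particular model's tokenizer would give. The function names and the 512-token reply reserve are hypothetical choices for illustration.

```python
import math

def estimate_tokens(text, chars_per_token=4.0):
    """Rough token estimate; real tokenizers will give different counts."""
    return math.ceil(len(text) / chars_per_token)

def fits_in_context(document, context_length=4096, reserved_for_reply=512):
    """Check whether a document leaves room for the model's reply."""
    return estimate_tokens(document) <= context_length - reserved_for_reply
```

Under that assumption, a 20,000-character document works out to about 5,000 tokens, which already overflows the 4096-token default but fits comfortably once the context length is raised to something like 16384.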

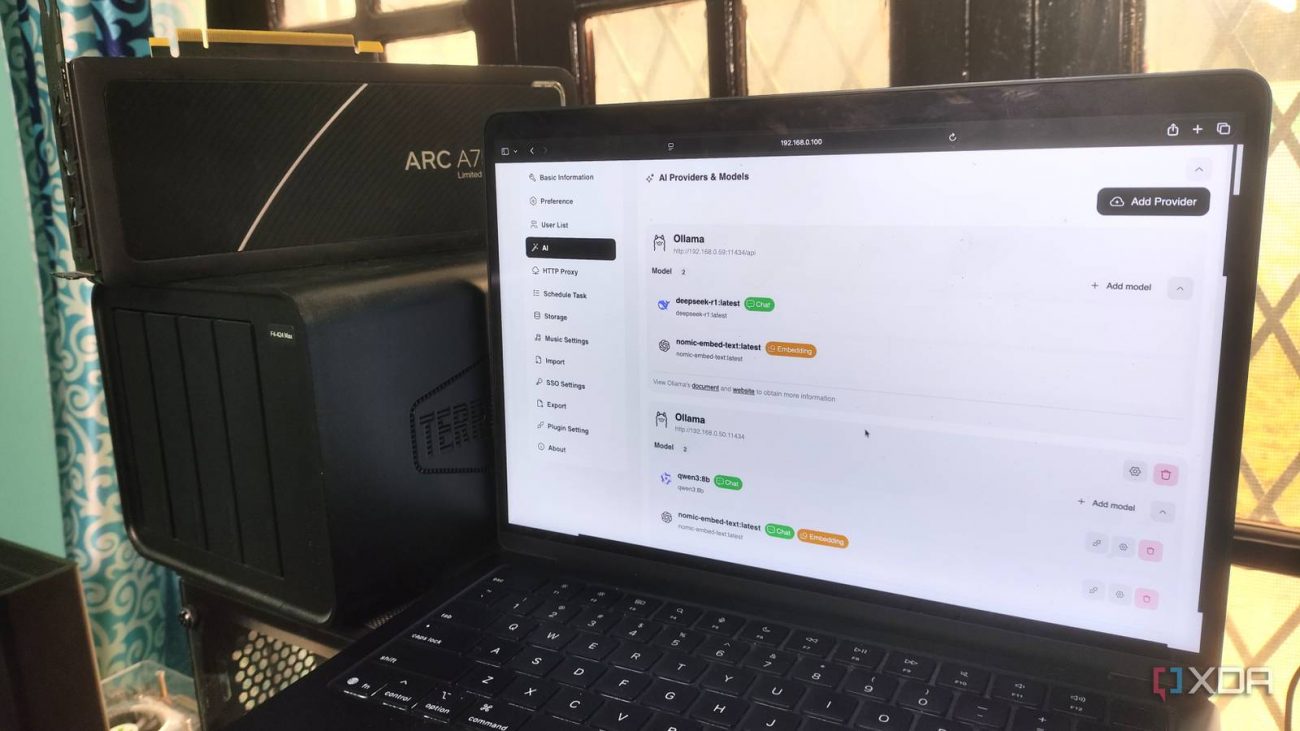

I’ve also got a bunch of FOSS services hooked up to my LM Studio models, which need dedicated embedding models for RAG capabilities. If that sounds unfamiliar, embedding models are responsible for converting documents into dense vectors that capture the semantic meaning of text instead of relying solely on keywords. I use Nomic Embed v1 as my primary embedding model, and despite powering a bunch of tools in my home lab, it’s extremely lightweight.
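As a sketch of what these services do behind the scenes, the Python snippet below asks a local server for embeddings and compares two vectors. It assumes LM Studio's local server is running with its OpenAI-compatible API on the default `http://localhost:1234/v1` address and that an embedding model is loaded. The `EMBED_MODEL` value is a placeholder, so swap in whatever identifier your own setup lists. Only the standard library is used.

```python
import json
import math
import urllib.request

BASE_URL = "http://localhost:1234/v1"  # assumption: default local server address
EMBED_MODEL = "nomic-embed-text-v1"    # placeholder: use the identifier your server shows

def embed(texts, base_url=BASE_URL, model=EMBED_MODEL):
    """Request one embedding vector per input string from the local server."""
    payload = json.dumps({"model": model, "input": texts}).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/embeddings",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return [item["embedding"] for item in body["data"]]

def cosine_similarity(a, b):
    """Similarity of two vectors: closer to 1.0 means closer in meaning."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0
```

With the server running, something like `vectors = embed(["router keeps dropping Wi-Fi", "wireless connection is unstable"])` followed by `cosine_similarity(vectors[0], vectors[1])` should score the pair as similar even though they share almost no keywords, which is exactly the property RAG tools lean on.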

For example, I use Blinko to manage my notes, and assigning Nomic Embed v1 as the embedding model on its web UI lets me use my to-do lists, blinkos, and notes as the knowledge base when chatting with LLMs. Likewise, I store my bills, academic records, invoices, product warranties, and other essential documents in my Paperless-ngx server, with Paperless AI letting me leverage my LLMs (and the embedding model) for RAG-based chats. I’ve also got a Karakeep instance running on my Proxmox server, and it supports text embedding models for its auto-tagging and summary generation tools.